Every Tool. Real Data. Real Time.

Runs on-premise with zero external dependencies. No data leaves your network. Every result computed in under one second.

Extract any capability from any model, transplant it into another. 99%+ alignment at frontier scale. Zero retraining. Zero GPU cost.

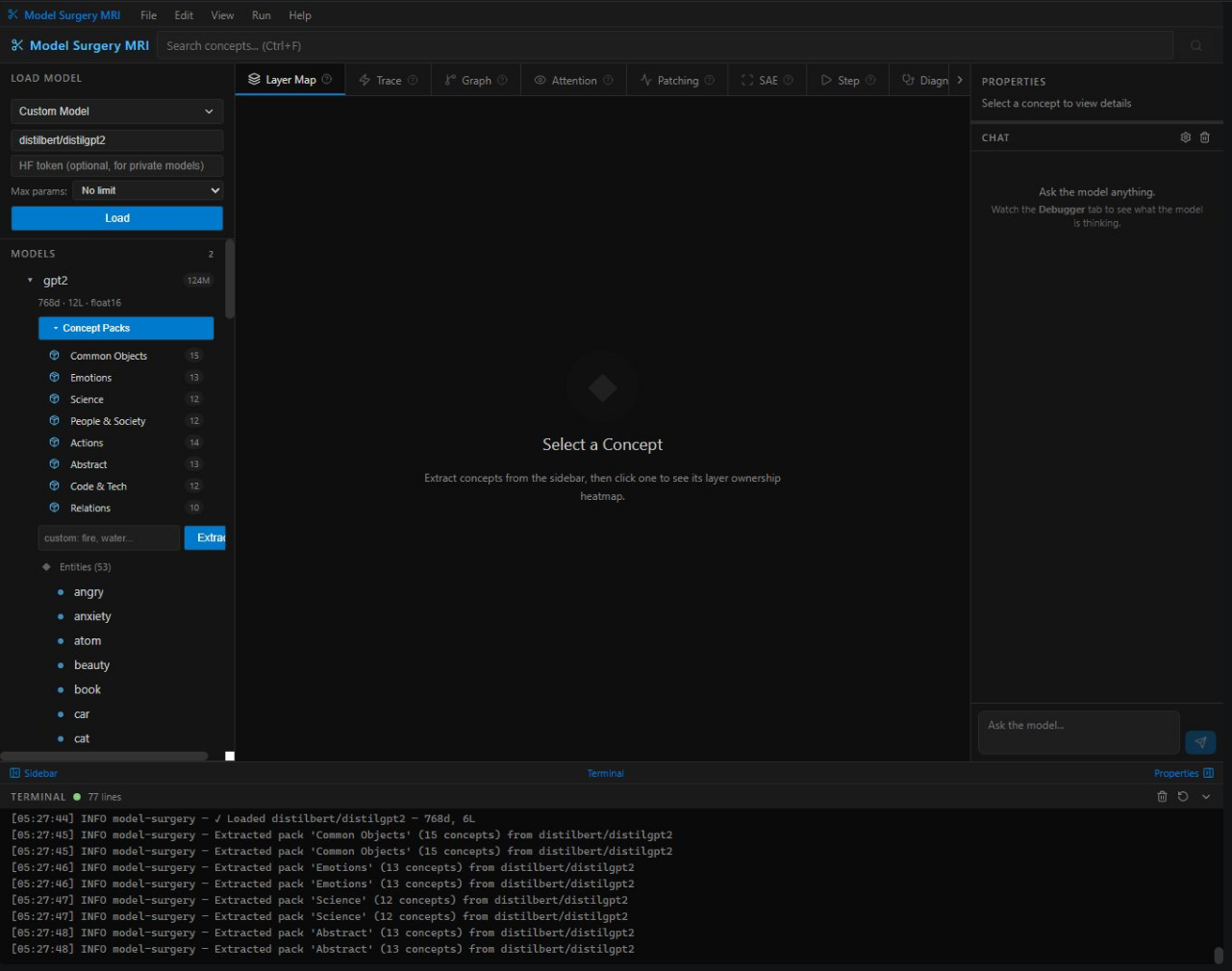

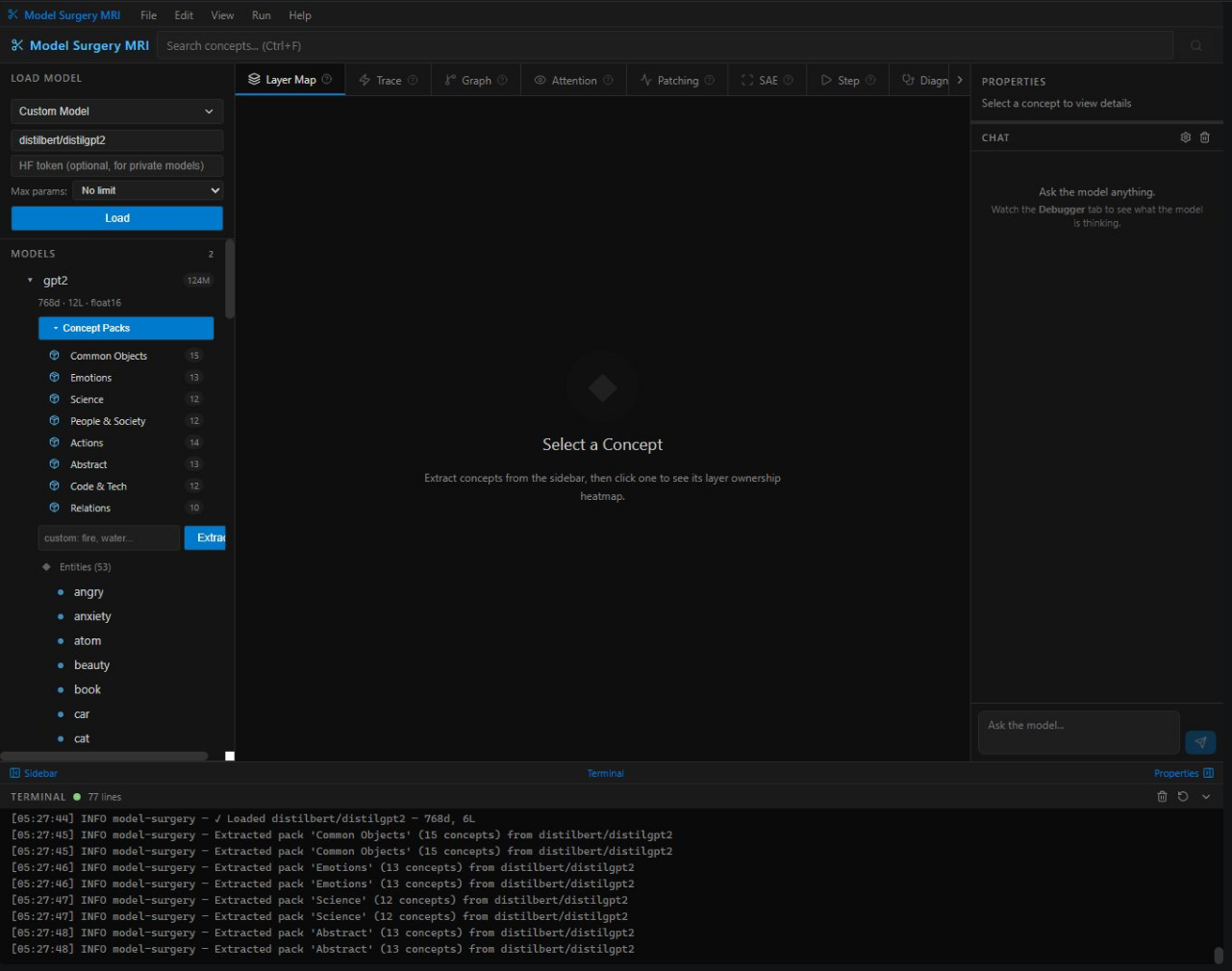

Every concept has a mathematical address inside the model. We find it in under one second across every weight matrix.

Different models store the same knowledge in different coordinate systems. We compute the exact rotation between them.

Write knowledge directly into model weights via rank-k conjugation. Interference detection prevents collateral damage.

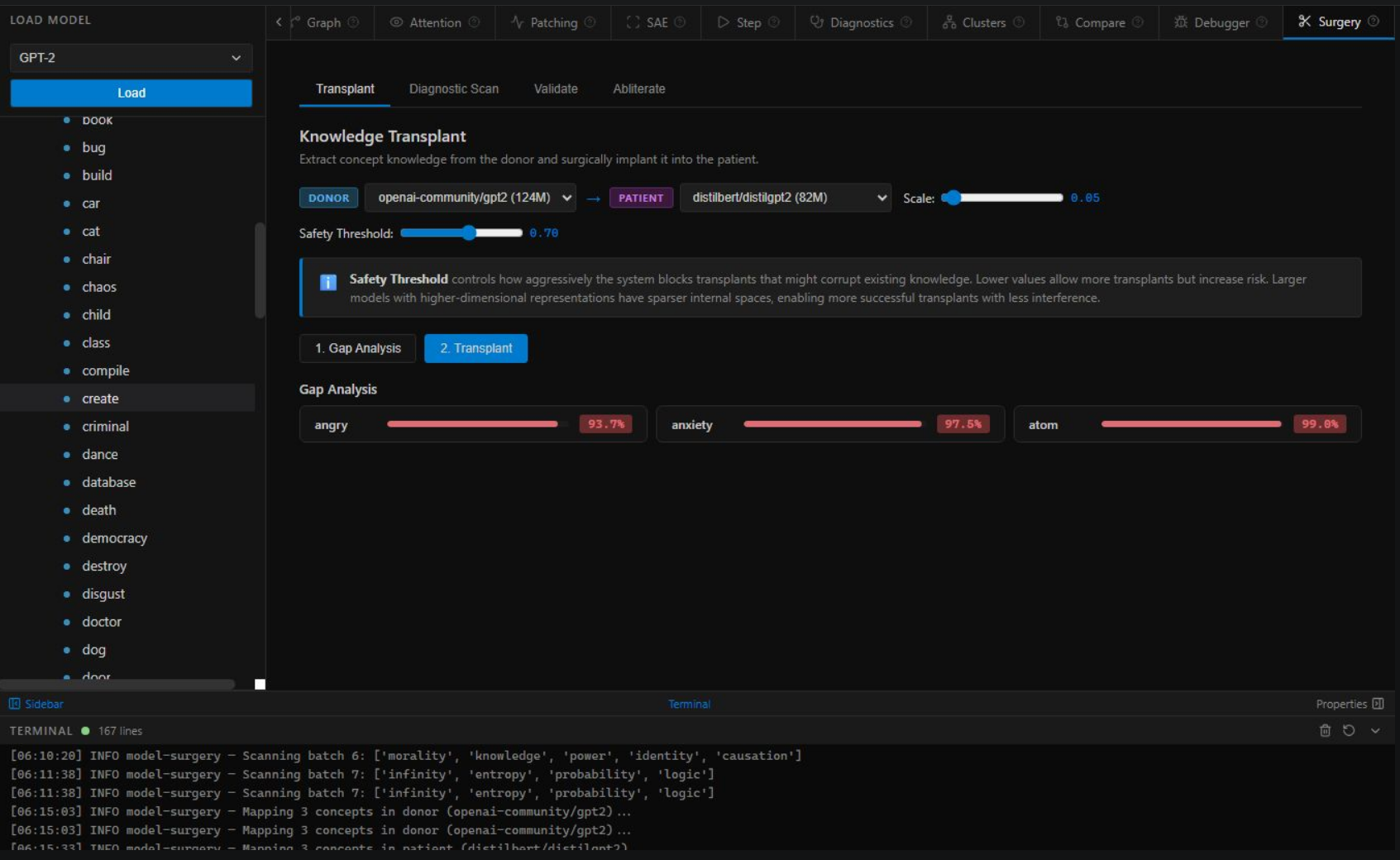

Type a concept, click send, and watch the platform scan your model's internals in real time. Every bar is a real measurement. Every gap is a transplant target.

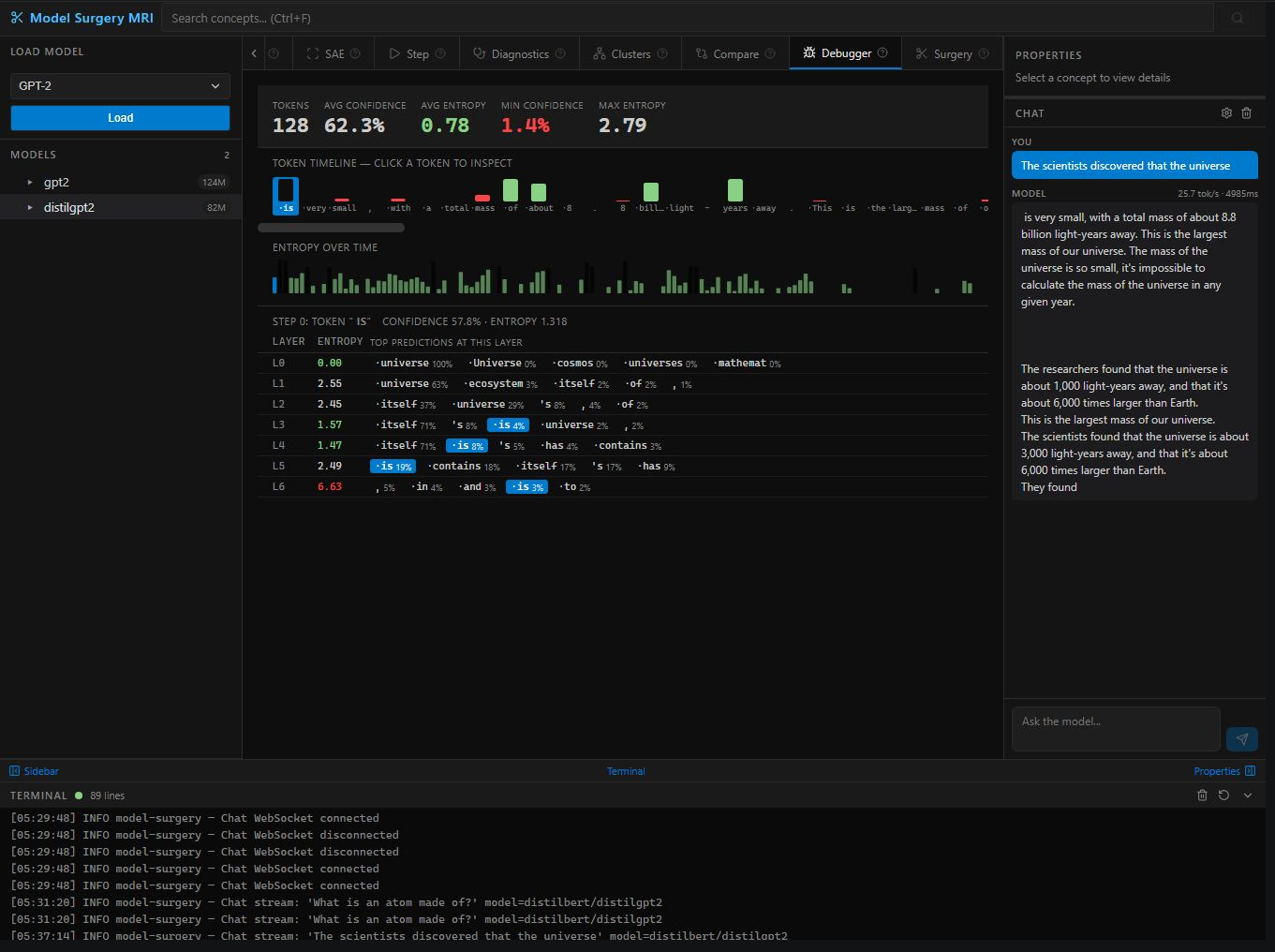

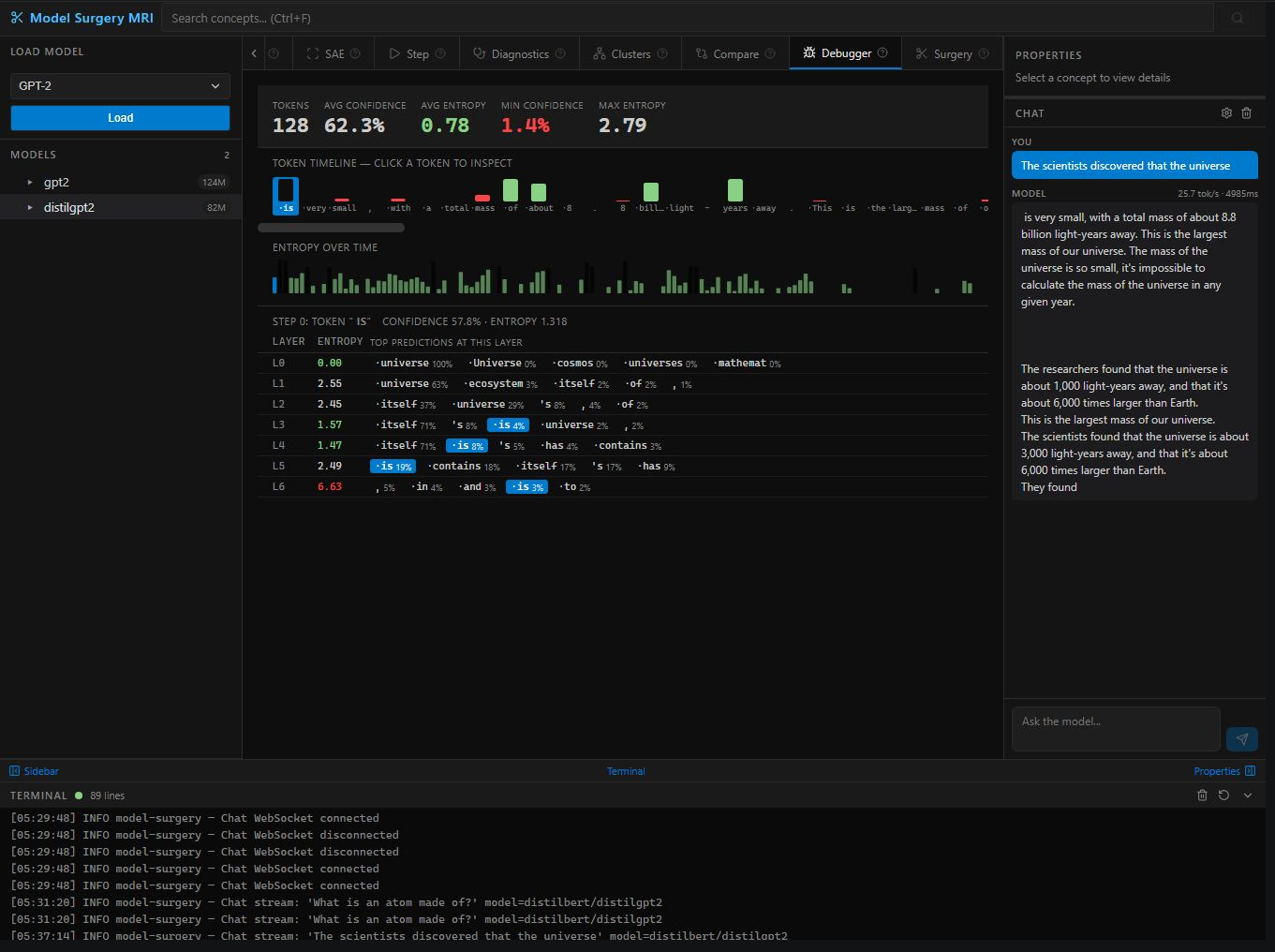

"gravity" transplanted into DistilGPT-2 · 24 layers · δ = 3.71 · 91.7% alignment verified

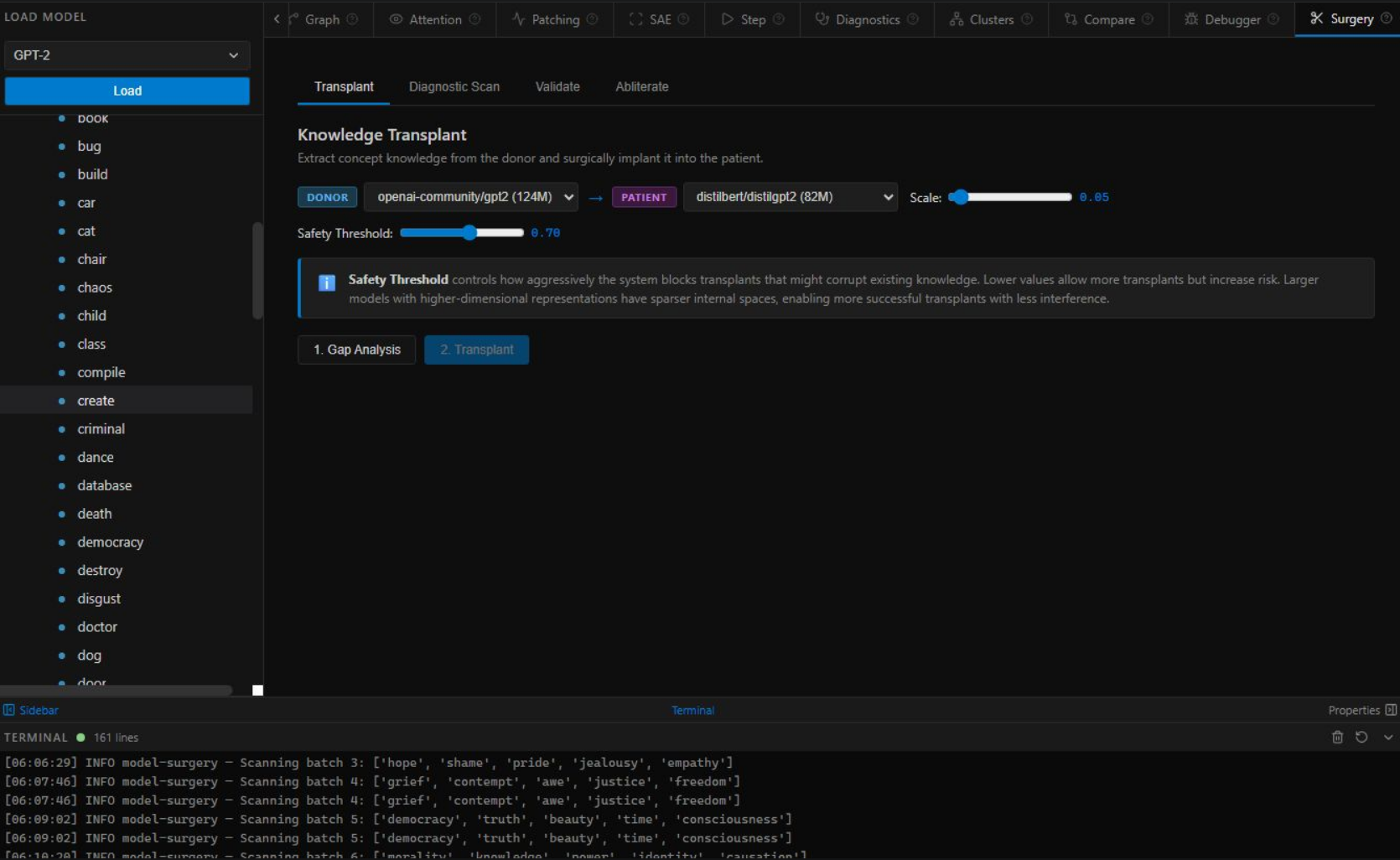

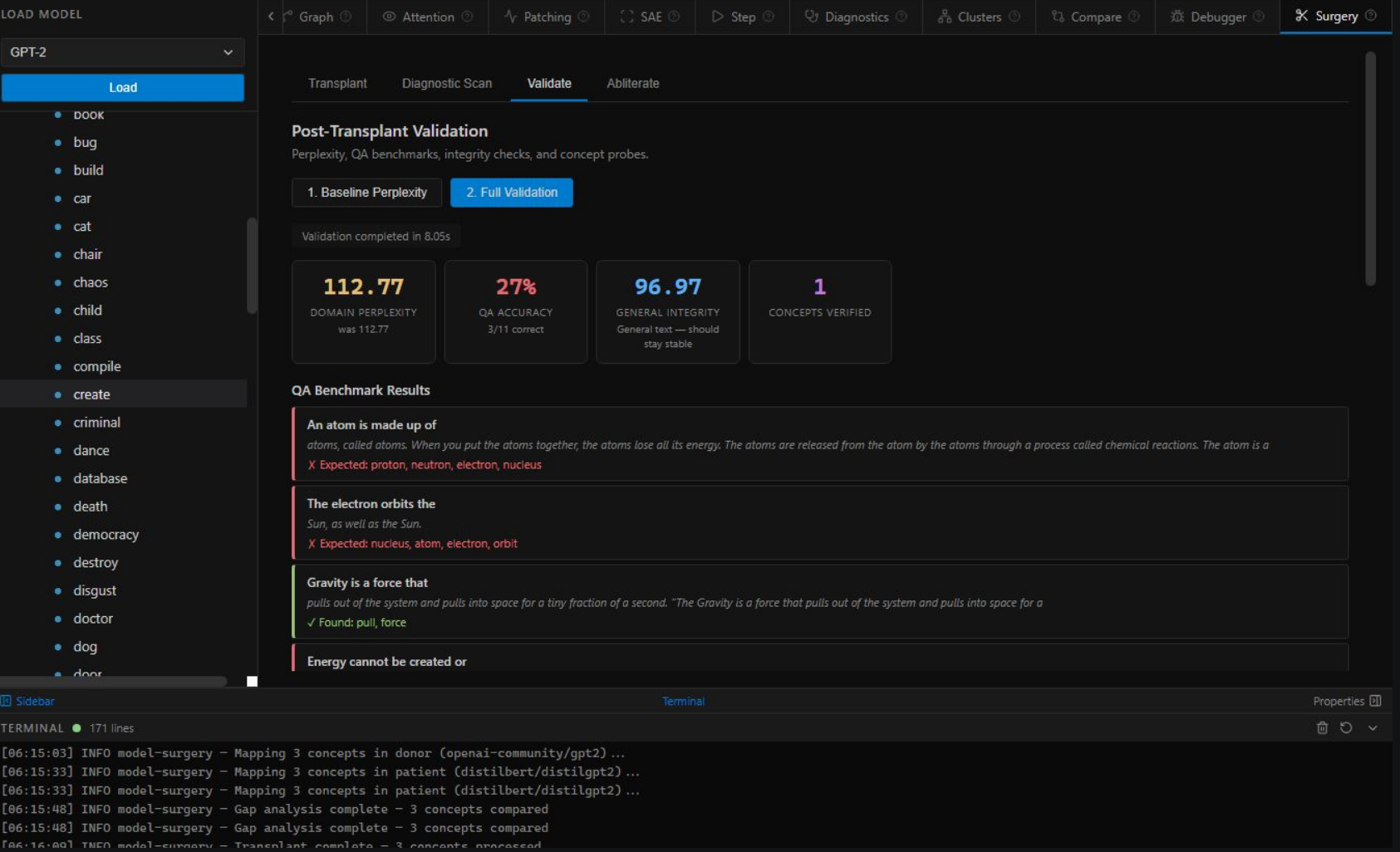

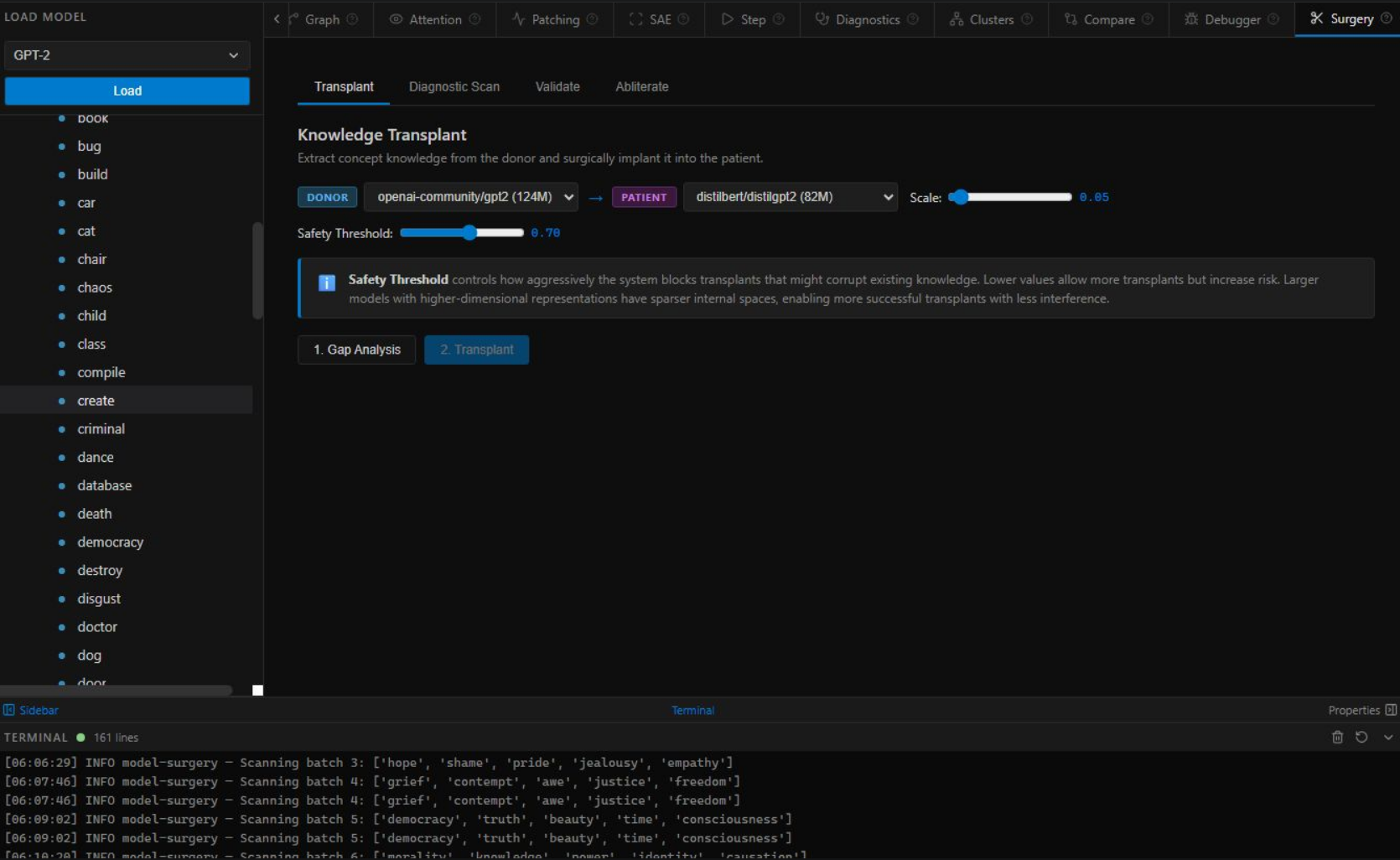

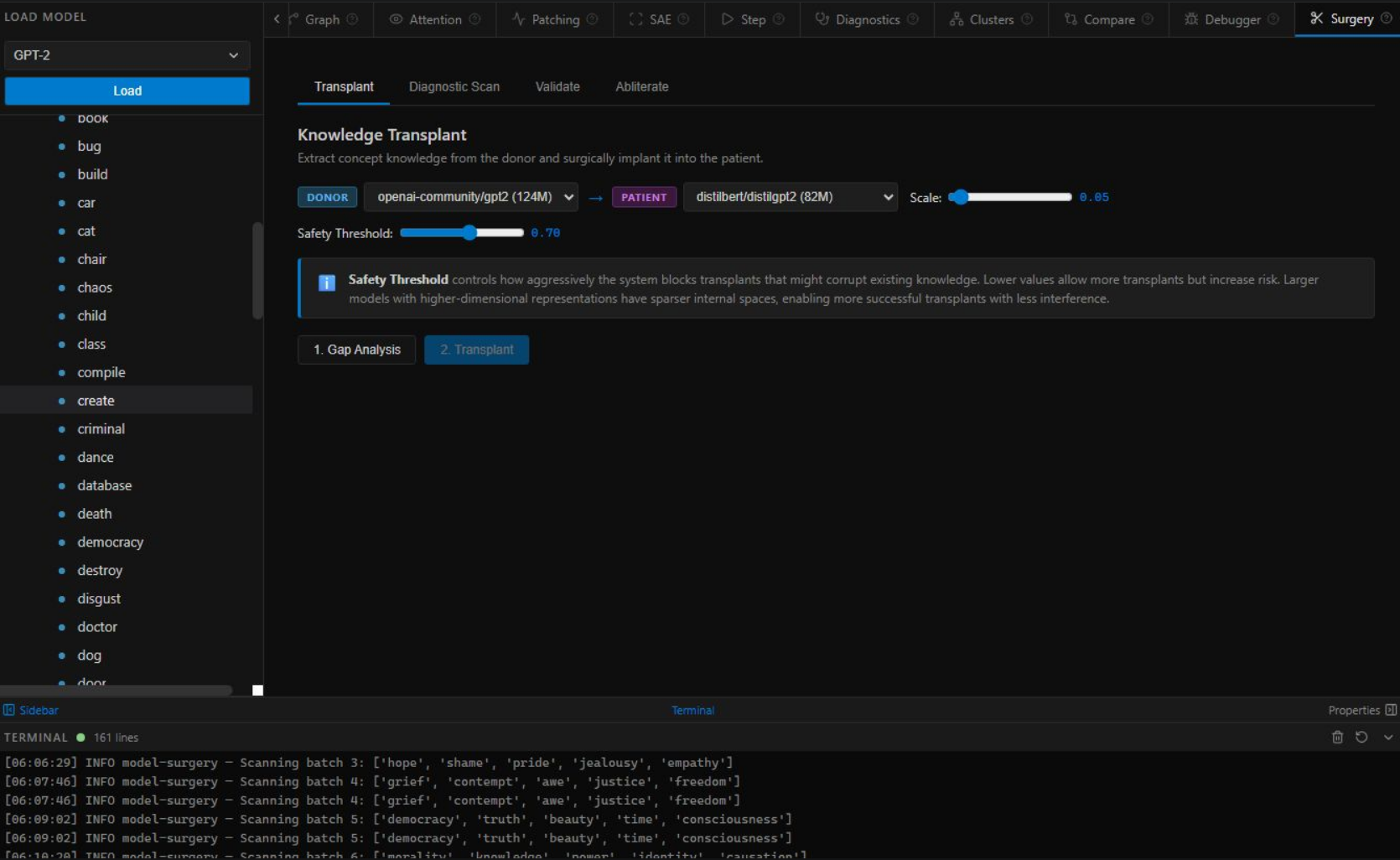

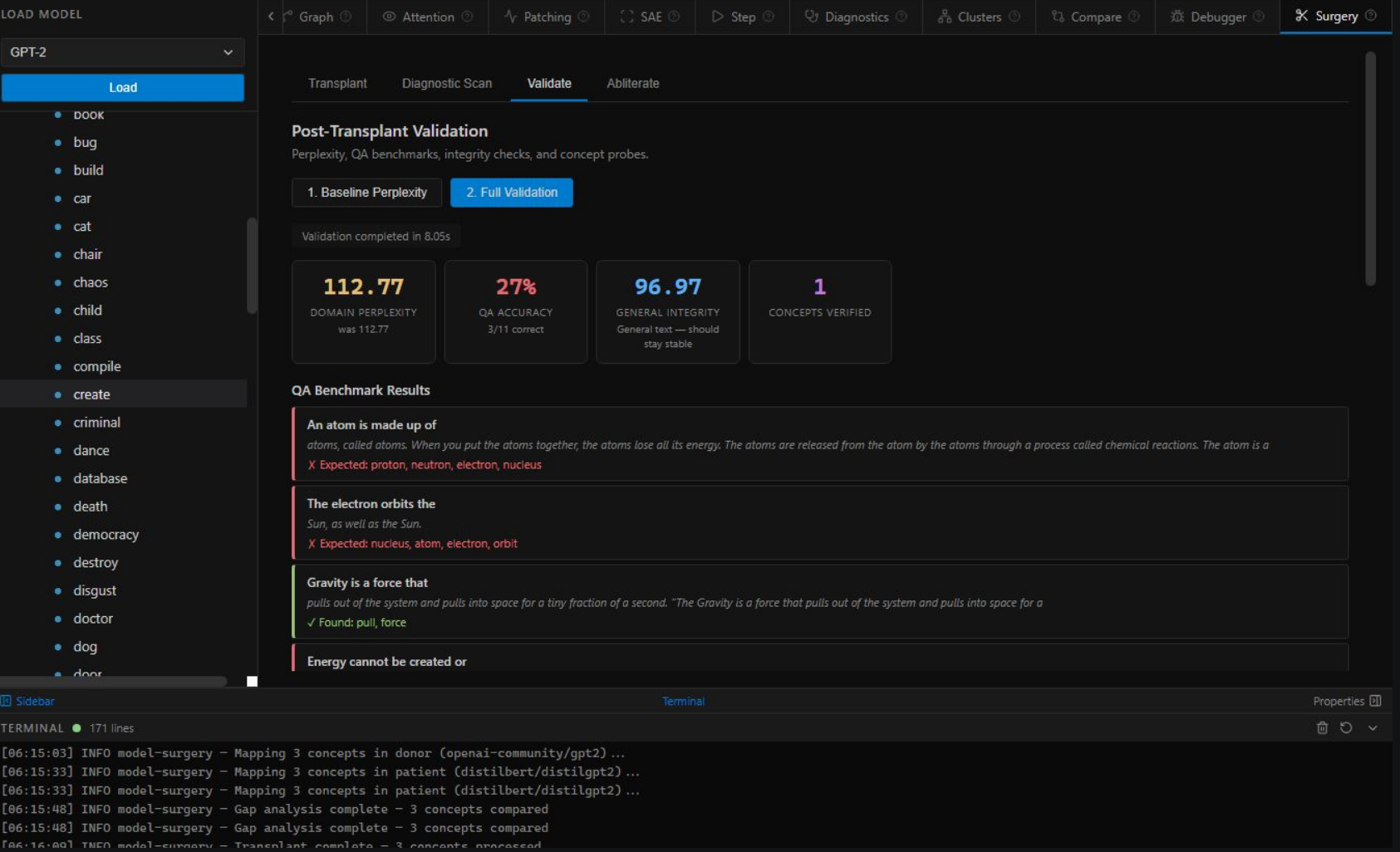

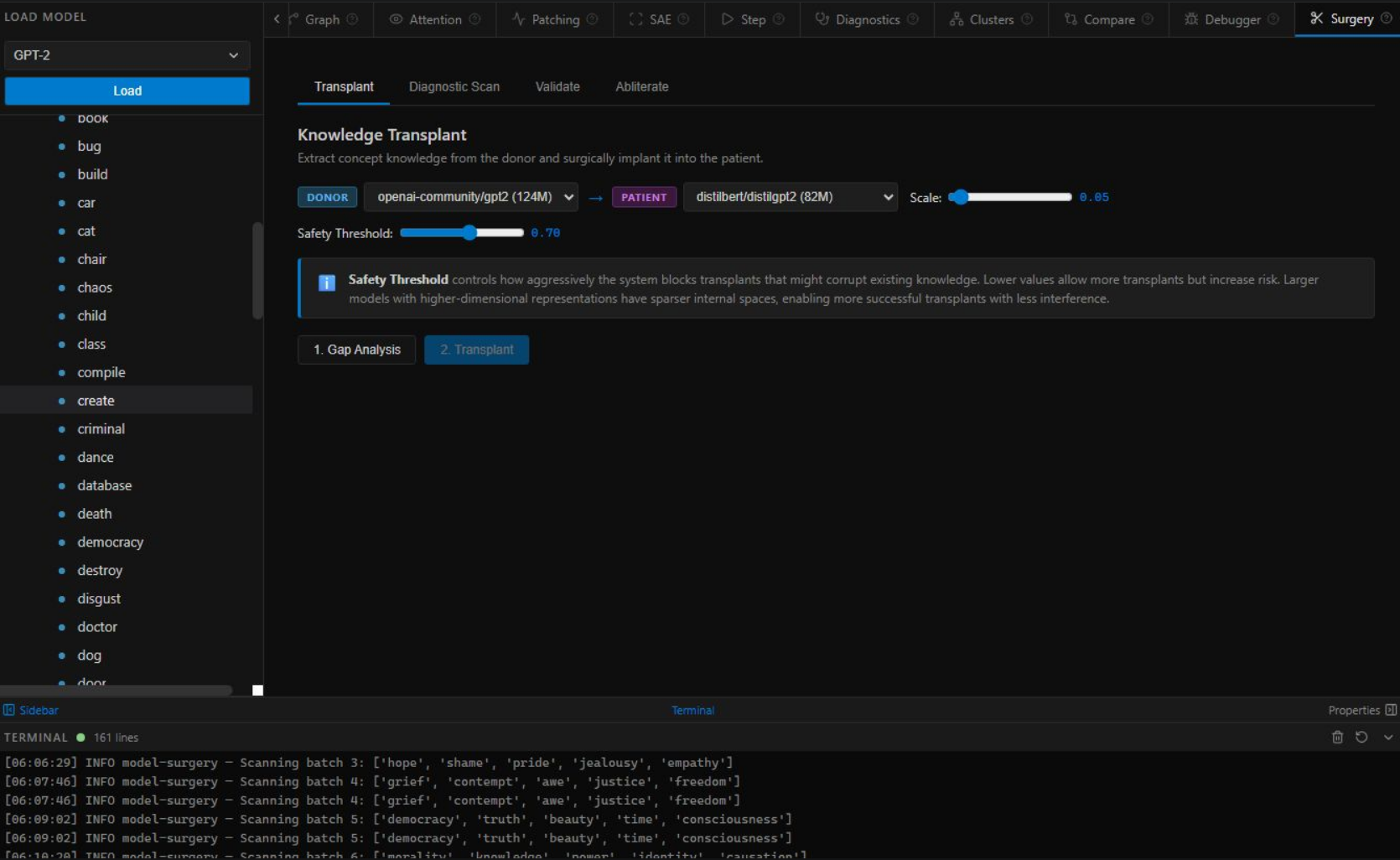

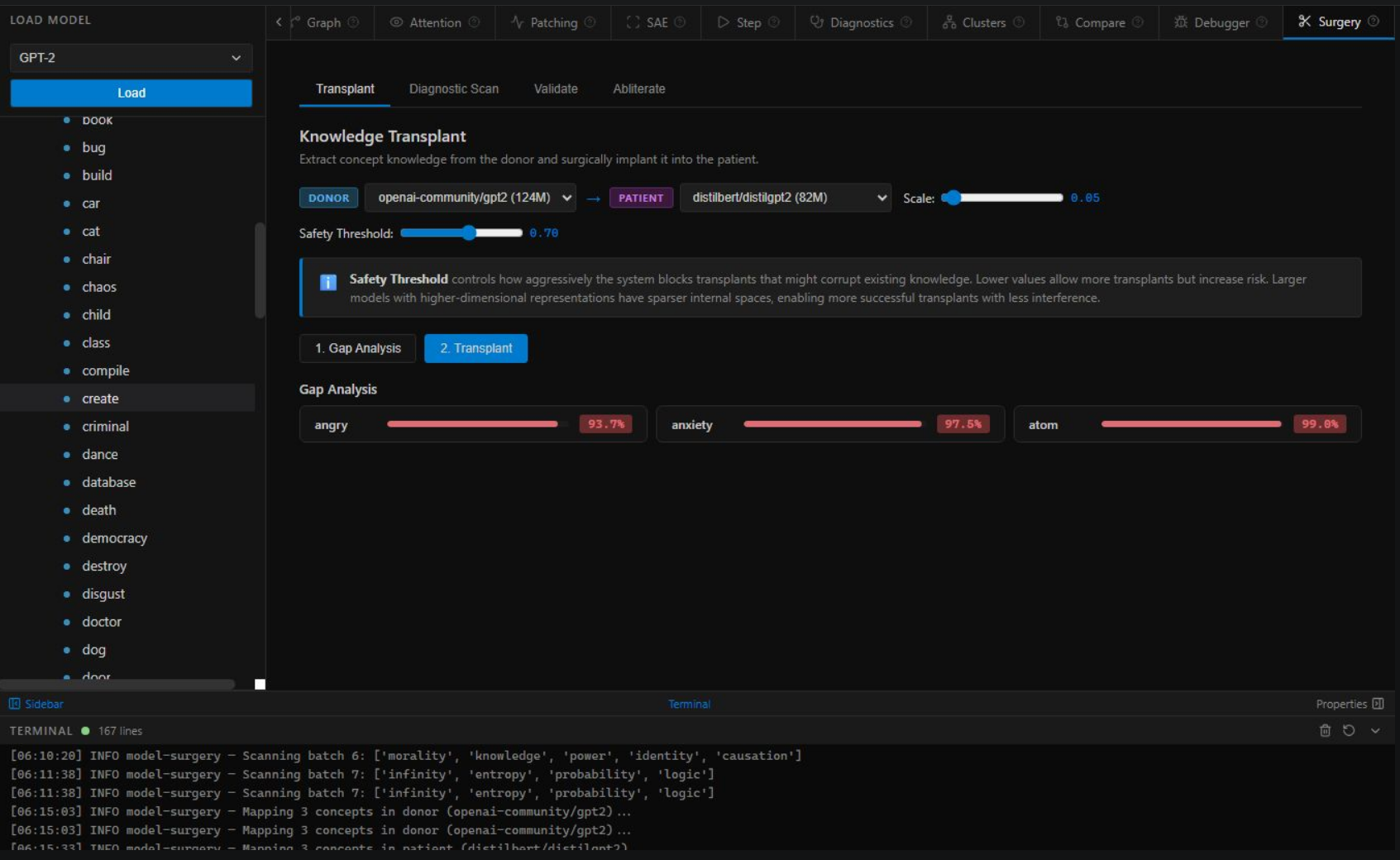

Select a donor model, pick a concept, and press transplant. The system maps, aligns, checks for interference, and writes the knowledge directly into model weights. Verified alignment before you close the tab.

For years, teams had two choices when they needed a new AI capability: retrain from scratch, or distill. Both cost a fortune. Both take months. We built the third option — and it costs nothing.

Our 12-stage pipeline distils to three novel scientific contributions — each independently publishable, together forming the first complete system for cross-model knowledge transplantation.

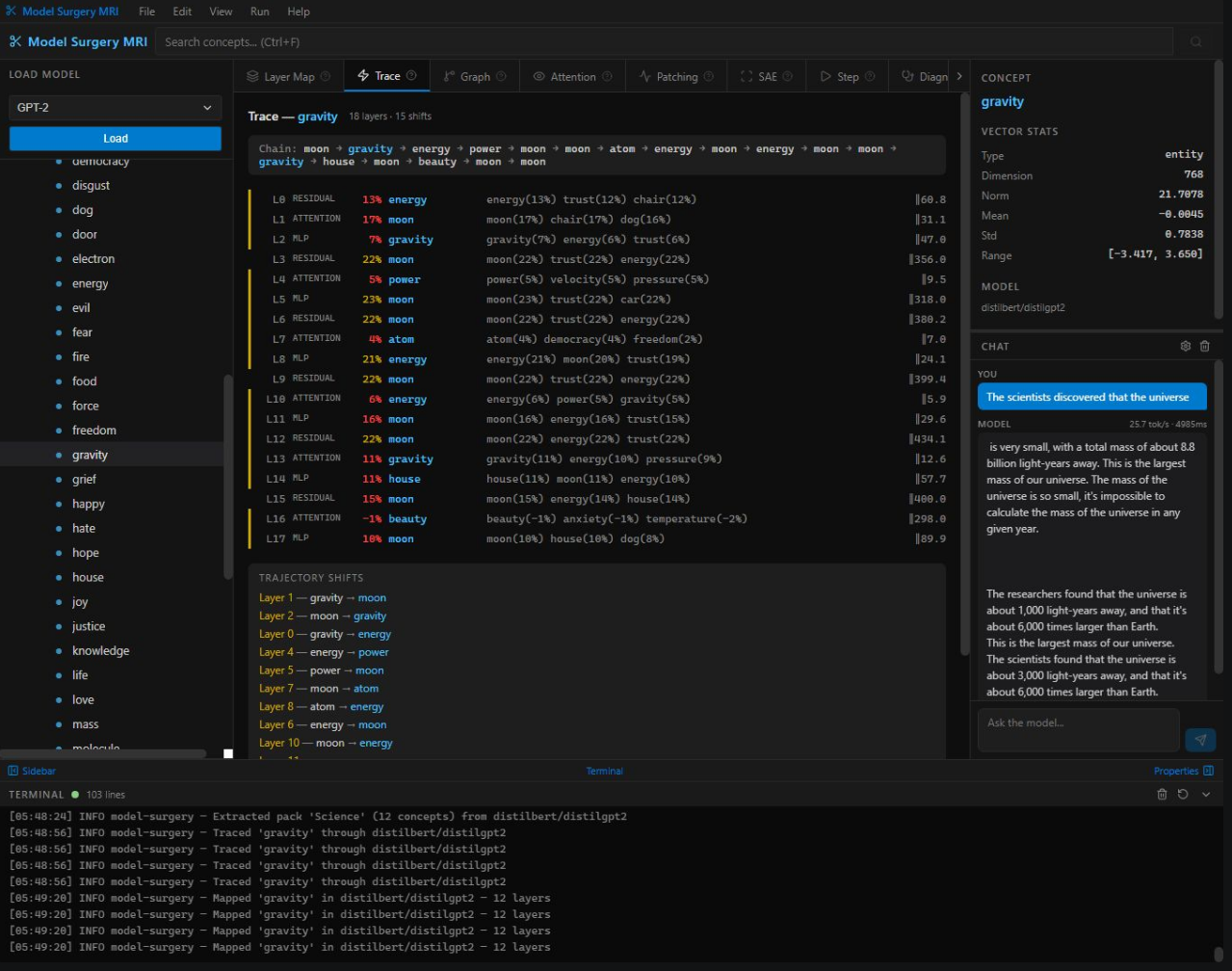

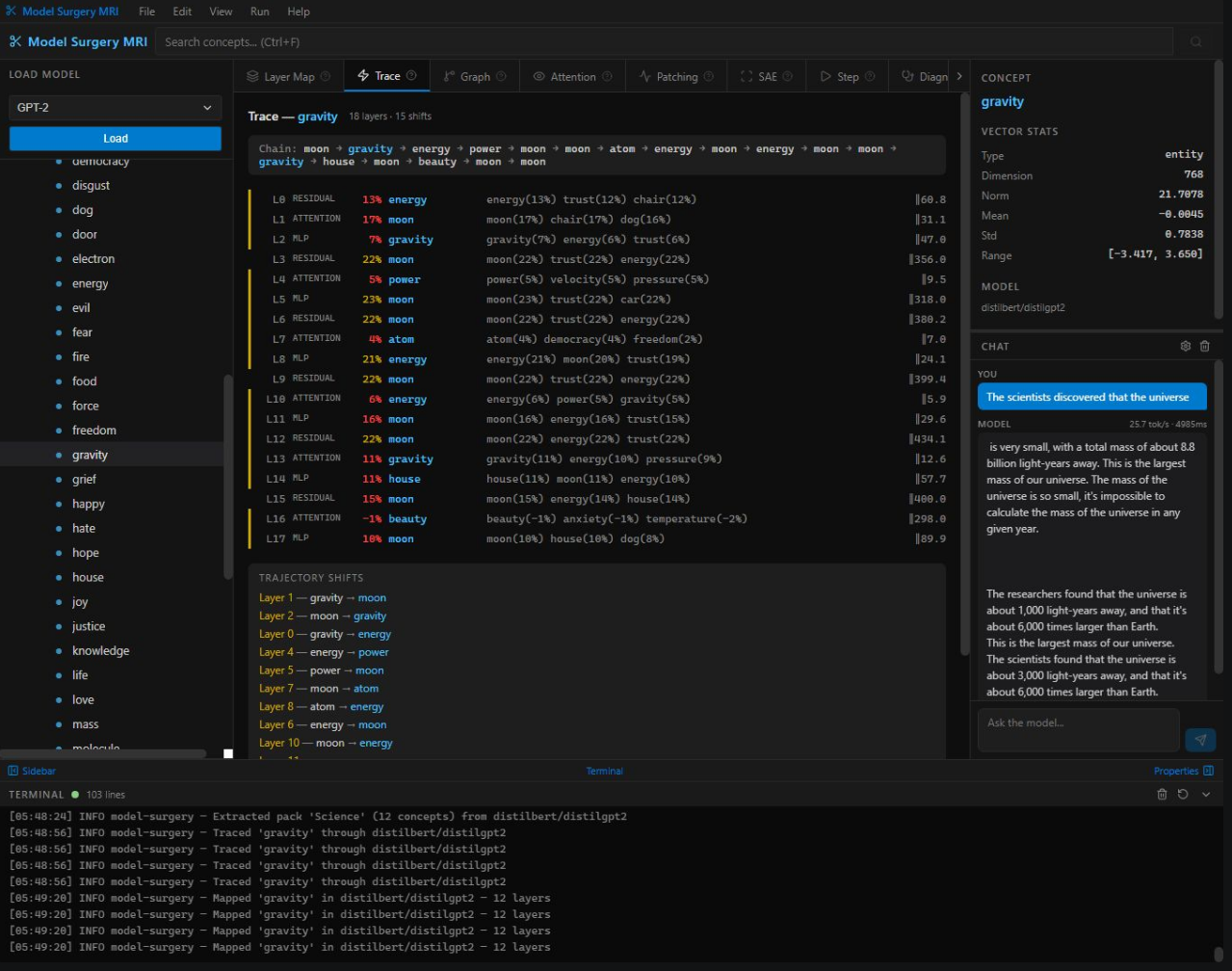

Every concept has a precise mathematical address inside a model's weight space. We compute it in under one second using a novel gradient-decomposition technique — producing a rank-k fingerprint that uniquely identifies where and how any piece of knowledge is stored. No training required.

GPT-2 and LLaMA store the same concept in different coordinate systems. We solve the orthogonal Procrustes problem independently at each network depth — computing the exact rotation matrix between any two models' internal geometries. Residuals approach zero at all layers.
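The orthogonal Procrustes step can be sketched in a few lines. This is a minimal illustration, not the product's implementation: the activation matrices `A` and `B`, their shapes, and the function name `procrustes_rotation` are assumptions made for the example, and the "second model" here is simply a rotated copy of the first so that the residual is known to be near zero.

```python
import numpy as np

def procrustes_rotation(A, B):
    """Return the orthogonal matrix R that minimizes ||A @ R - B||_F."""
    # Classical solution: SVD of the cross-covariance A^T B, then R = U V^T.
    U, _, Vt = np.linalg.svd(A.T @ B)
    return U @ Vt

rng = np.random.default_rng(0)

# Hypothetical activations at one depth: 50 concepts, 8 hidden dimensions.
A = rng.normal(size=(50, 8))

# Build a toy "second model" whose coordinates are an exact rotation of A.
Q, _ = np.linalg.qr(rng.normal(size=(8, 8)))
B = A @ Q

R = procrustes_rotation(A, B)
residual = np.linalg.norm(A @ R - B)
print(f"residual after alignment: {residual:.2e}")
```

In practice the two models would not be exact rotations of each other, so the residual measures how well a pure rotation explains the difference at that depth. The solution is orthogonal but may include a reflection; constraining it to a proper rotation requires an extra sign correction on the last singular vector.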

We write knowledge directly into model weights via rank-k conjugation, RᵀΔR, where R is the Procrustes rotation and Δ is the concept delta. Before any edit, interference detection scans for concept collisions. After surgery, an independent probe verifies the graft: 91.7% on small models, 99%+ at frontier scale.
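As a rough sketch of what the conjugation does numerically, the example below builds a random rank-k delta and a random orthogonal matrix standing in for the Procrustes rotation, then applies RᵀΔR to a toy weight matrix. All values, dimensions, and variable names are hypothetical; the point is only that conjugation by an orthogonal matrix re-expresses the delta in new coordinates while preserving its rank and norm.

```python
import numpy as np

rng = np.random.default_rng(1)
d, k = 6, 2

# Hypothetical rank-k concept delta, expressed in the donor's coordinates.
delta = rng.normal(size=(d, k)) @ rng.normal(size=(k, d))

# Stand-in for the Procrustes rotation between donor and recipient.
R, _ = np.linalg.qr(rng.normal(size=(d, d)))

# Conjugation: the same update, re-expressed in the recipient's coordinates.
delta_recipient = R.T @ delta @ R

# Apply the edit to a toy recipient weight matrix.
W = rng.normal(size=(d, d))
W_edited = W + delta_recipient

print("rank of transplanted delta:", np.linalg.matrix_rank(delta_recipient))
```

Because the edit stays rank k, it touches only a k-dimensional slice of the weight matrix, which is what makes a collision check against other concept directions feasible before writing.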

Most tools break at scale. Model Surgery gets more precise the larger the model — because larger weight spaces have more structured geometry.

The same pipeline that works on research models works on frontier models — and works better. Ready for the next Opus, Grok, or GPT.

See inside any transformer — every weight matrix, every attention head, every concept location, every decision the model makes. A complete operating room for neural networks, from real-time generation debugging to surgical knowledge transplantation. Runs on-premise with zero cloud dependencies.

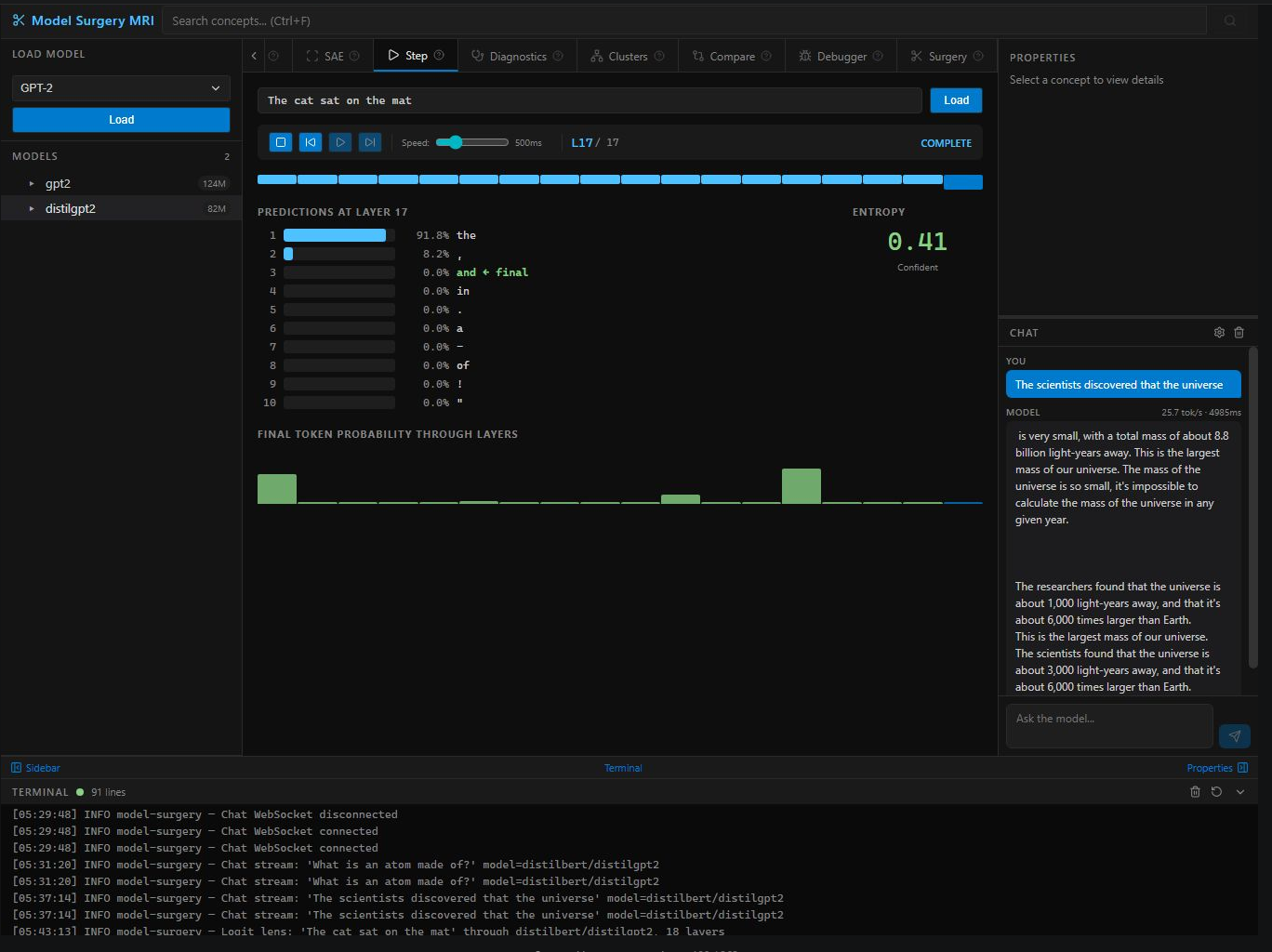

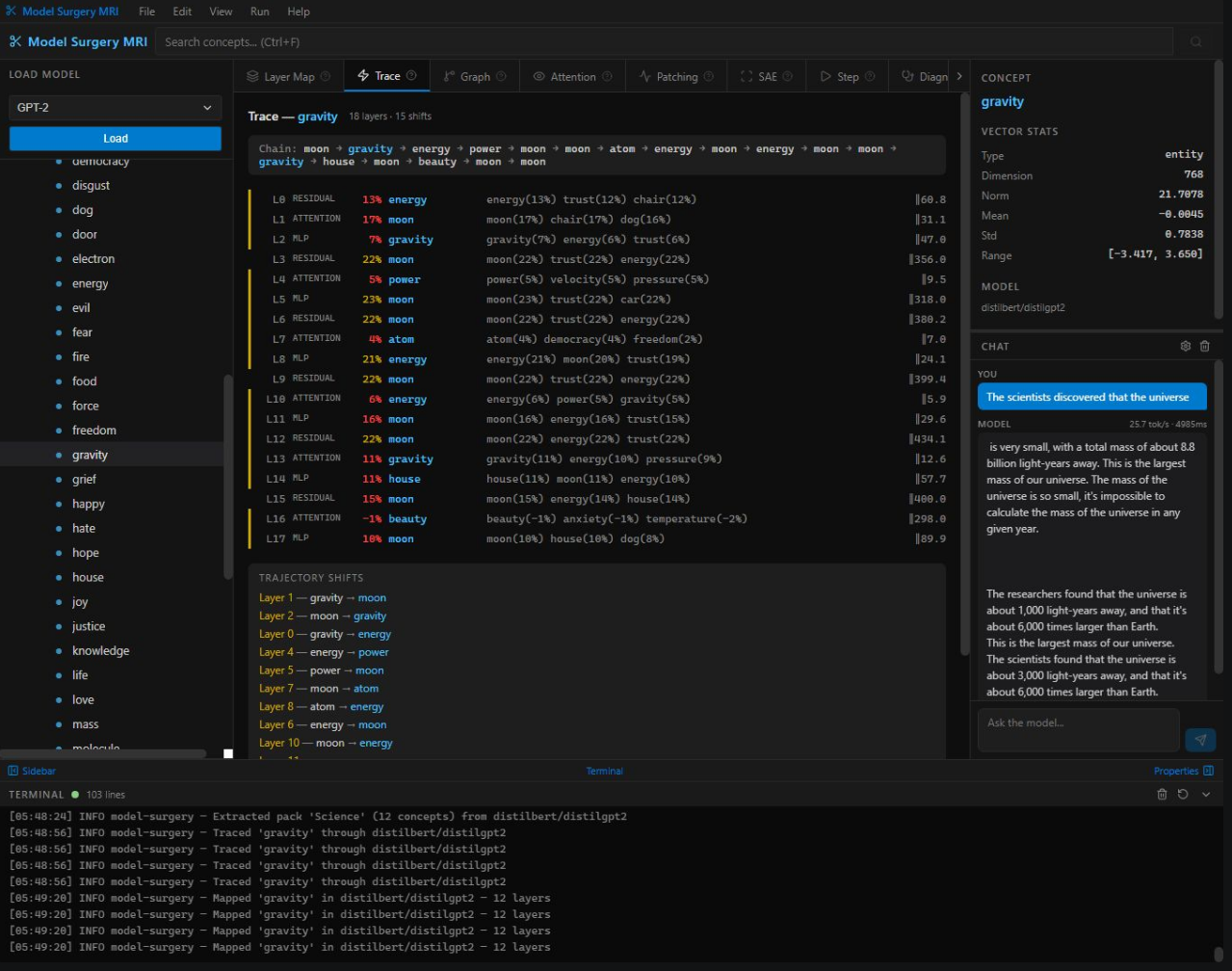

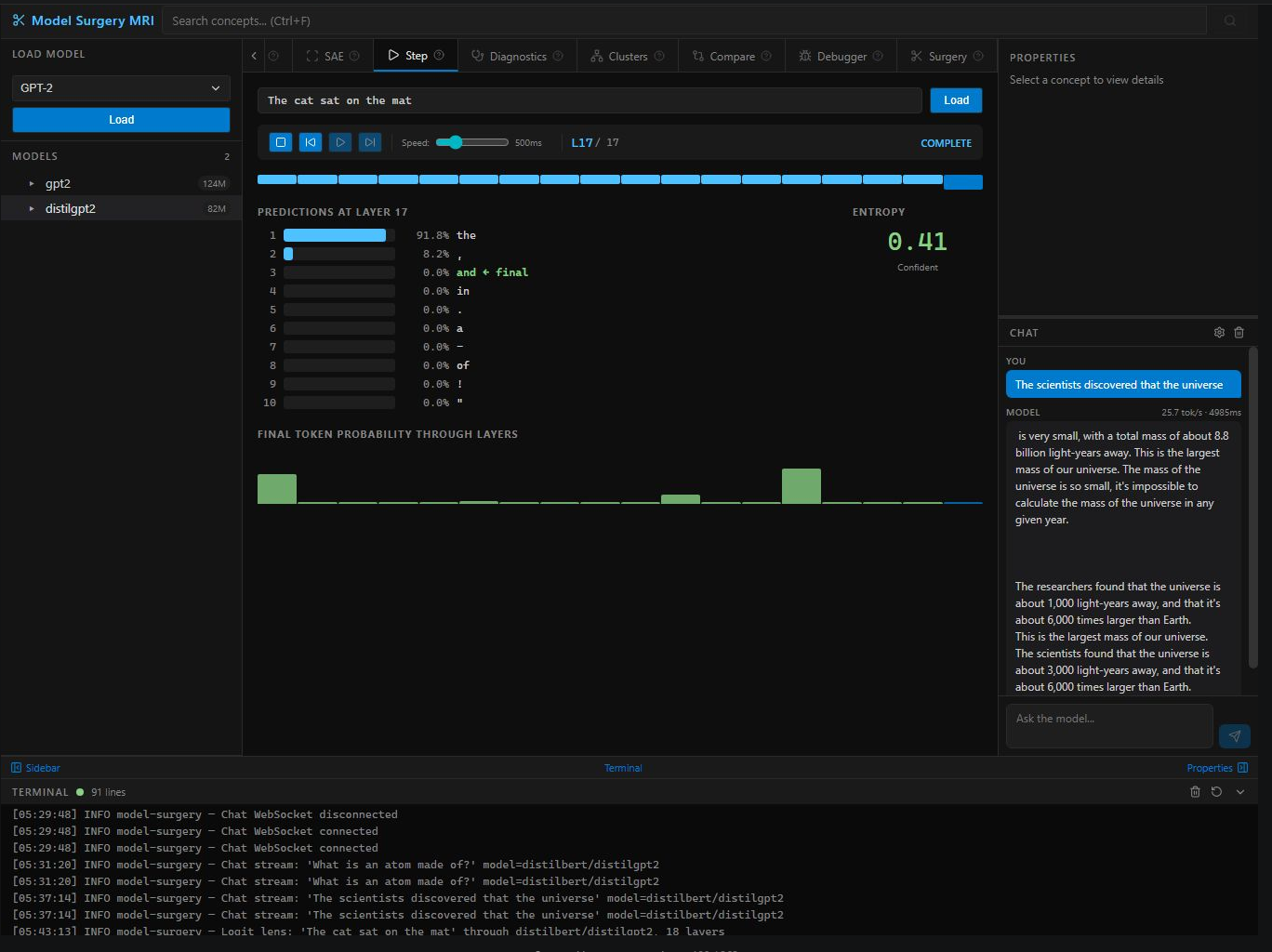

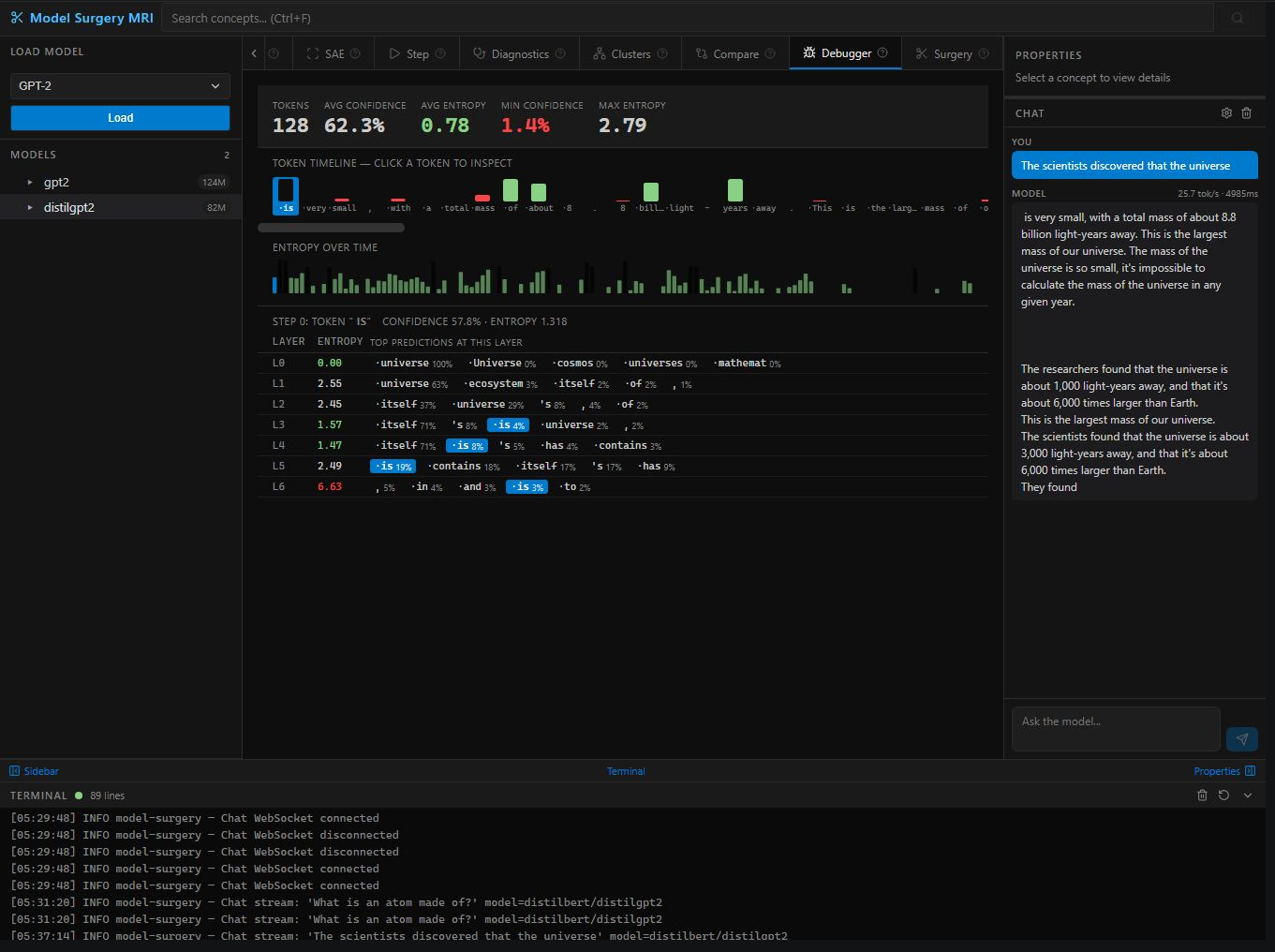

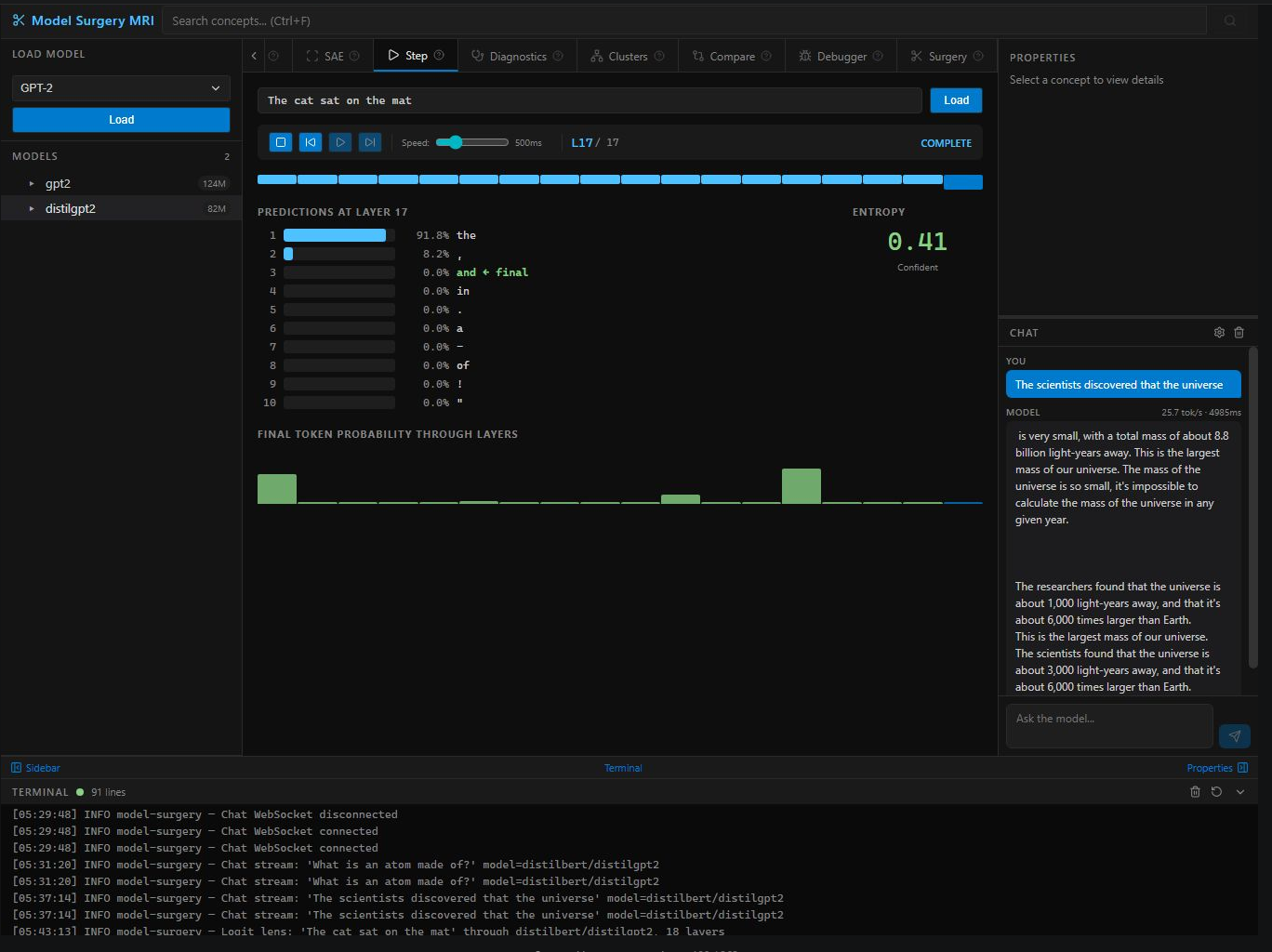

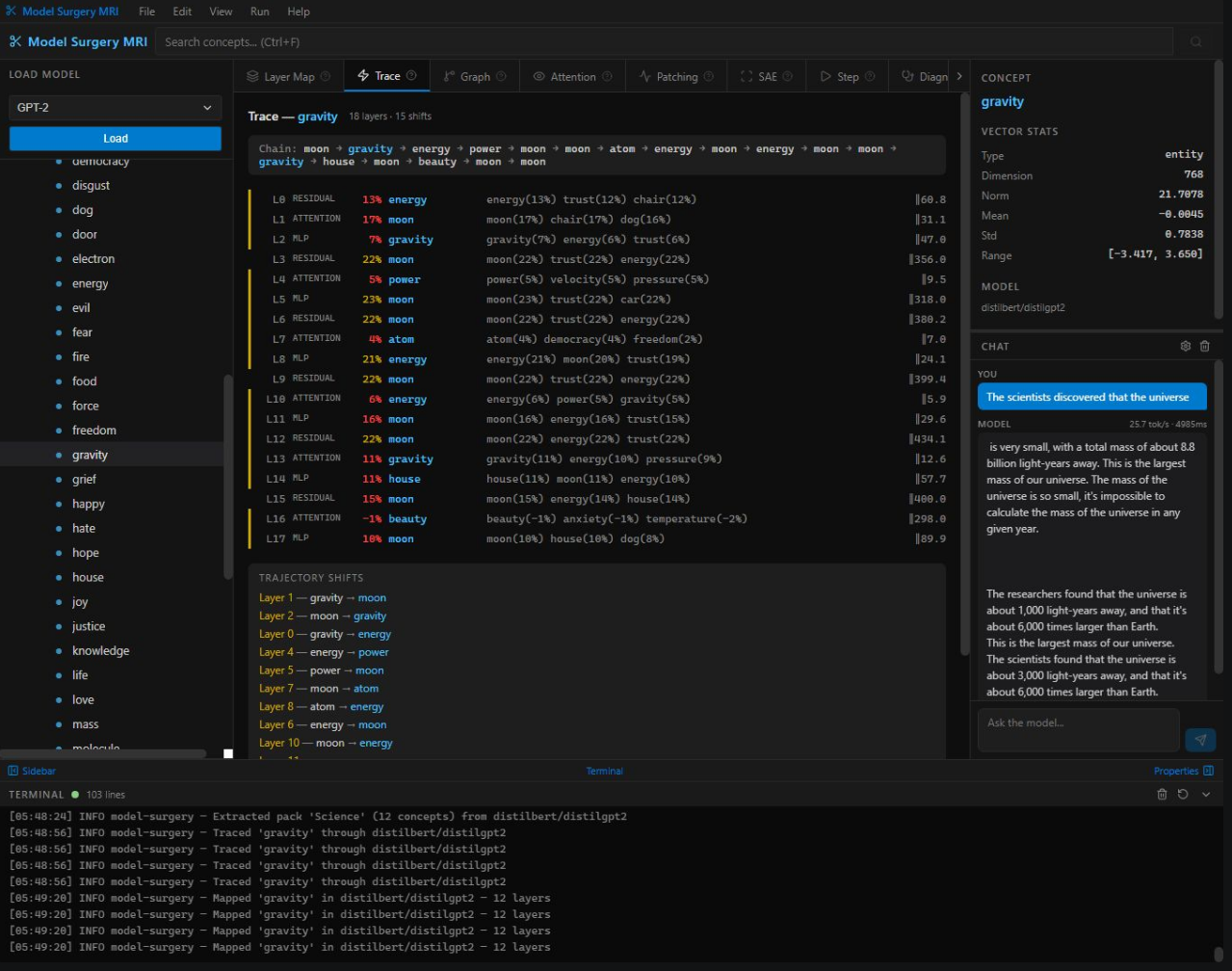

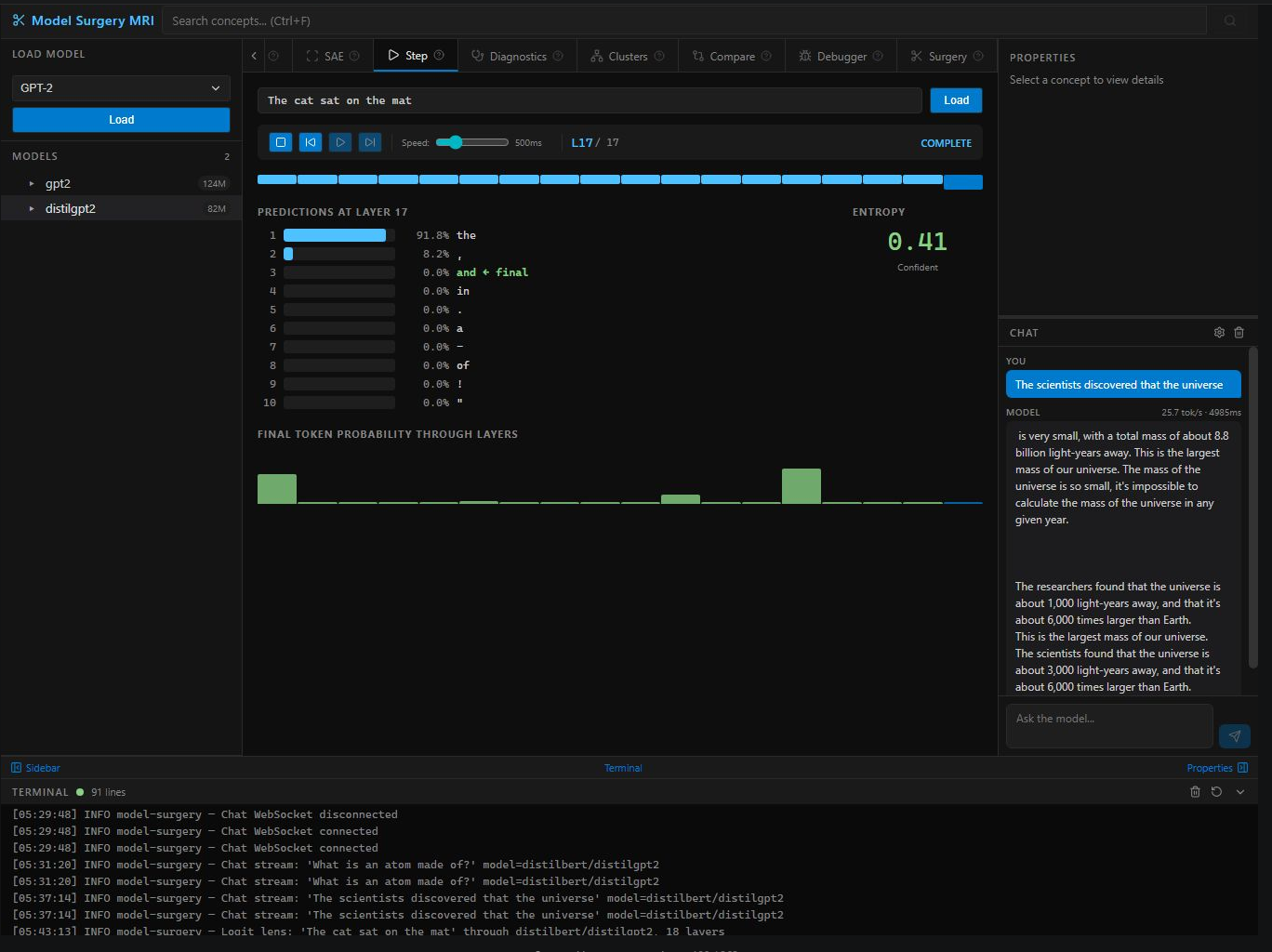

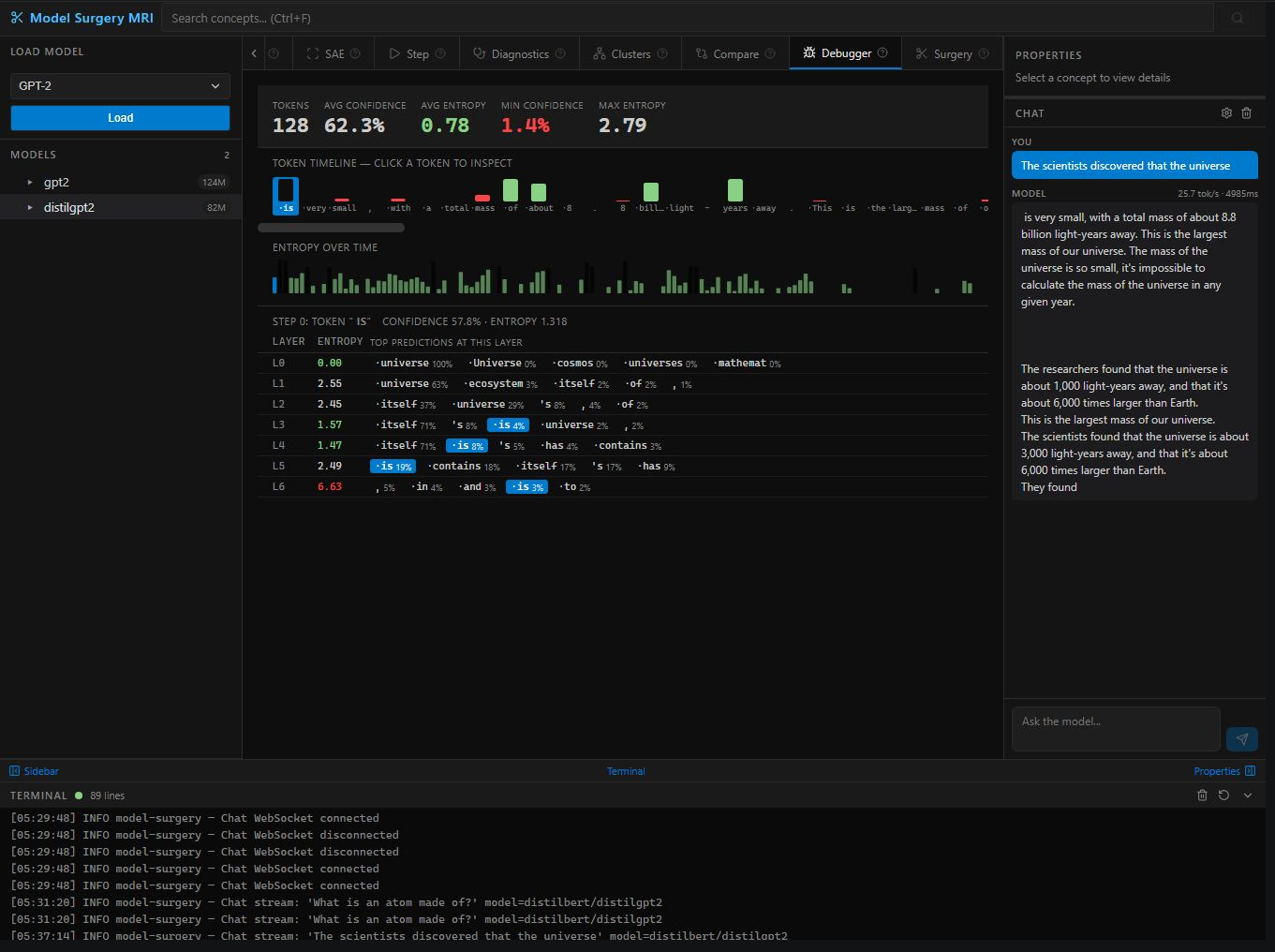

Watch a model think token-by-token. Confidence scores, entropy, and per-layer predictions for every generated token. Catch hallucinations at the layer they originate.
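The confidence and entropy figures can be illustrated with plain arithmetic. The sketch below assumes that each layer's hidden state has already been projected to vocabulary logits (the usual logit-lens setup); the logit values and the four-token vocabulary are made up for the example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def token_stats(logits):
    """Return (confidence, entropy in nats) for one next-token distribution."""
    probs = softmax(logits)
    confidence = max(probs)
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return confidence, entropy

# Hypothetical per-layer logits for a single generated token.
layer_logits = [
    [0.1, 0.0, 0.2, 0.1],  # early layer: nearly uniform guess
    [1.0, 0.2, 2.0, 0.1],  # middle layer: one candidate pulls ahead
    [0.5, 0.1, 6.0, 0.2],  # final layer: committed
]

stats = [token_stats(logits) for logits in layer_logits]
for depth, (conf, ent) in enumerate(stats):
    print(f"layer {depth}: confidence={conf:.3f} entropy={ent:.3f}")
```

A token whose entropy stays high through the final layers, or whose top prediction flips late, is the kind of pattern a debugger like this would surface for inspection.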

VCR controls to step through layers one at a time. Watch predictions form in slow motion — early layers guess, middle layers refine, final layers commit.

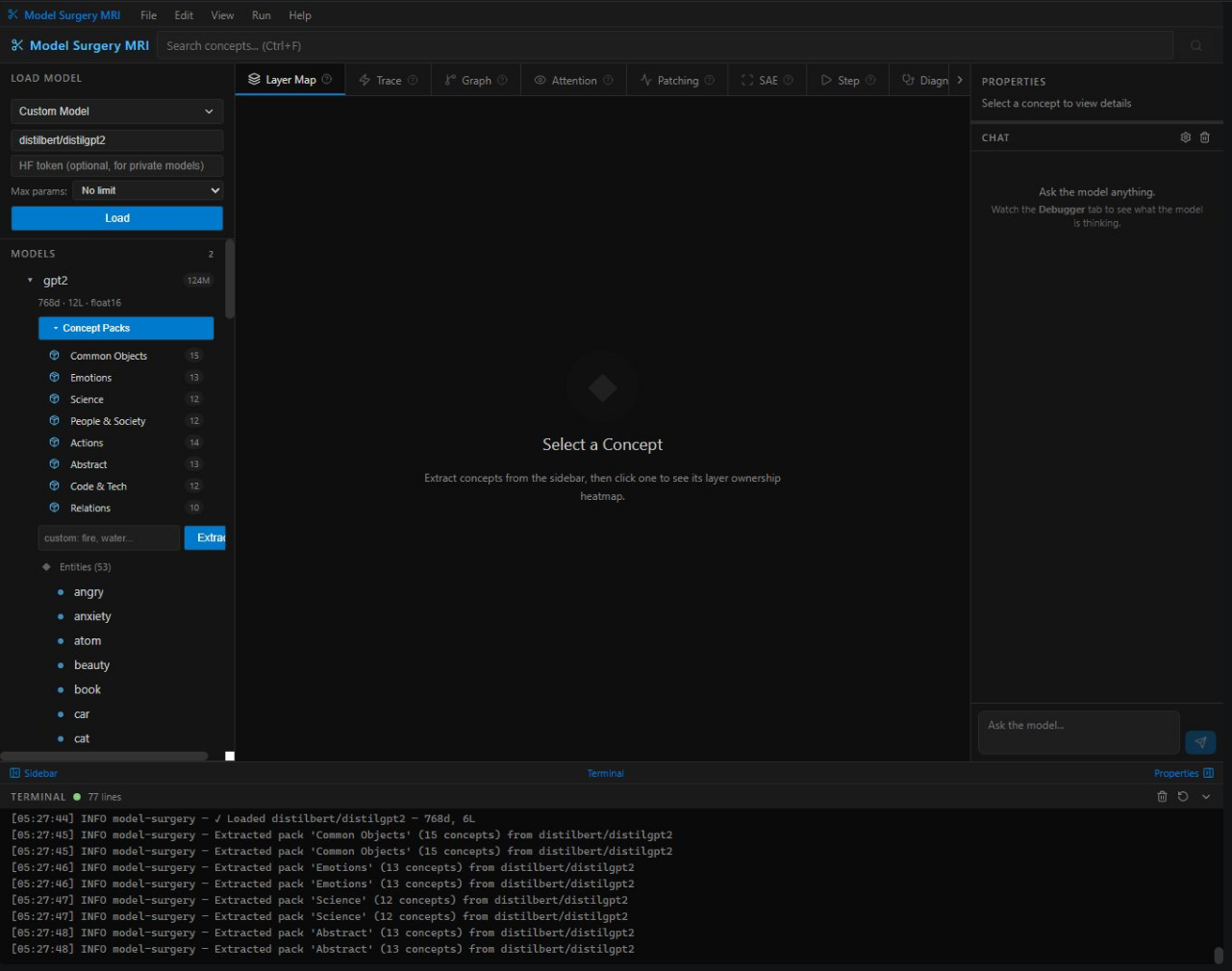

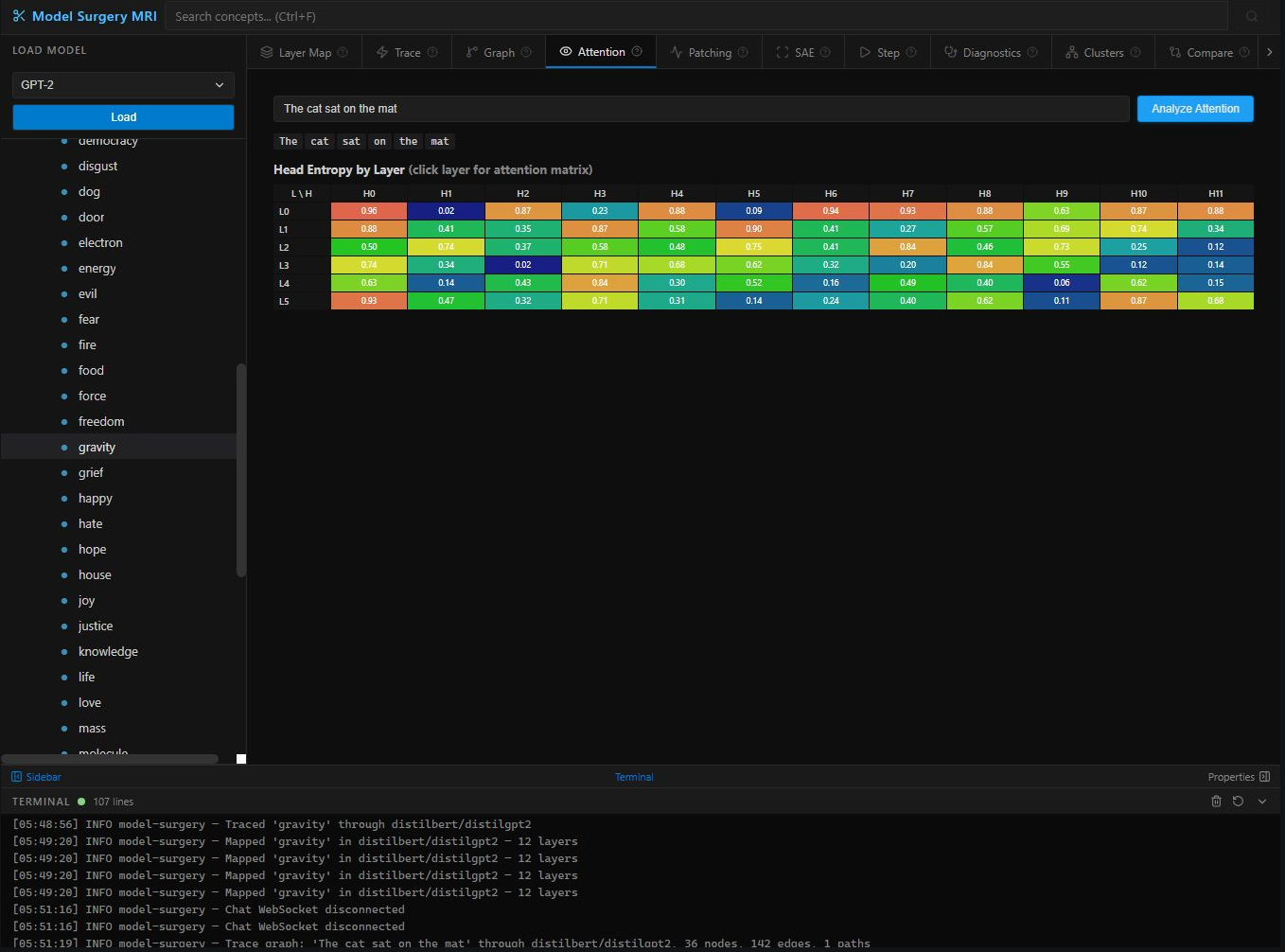

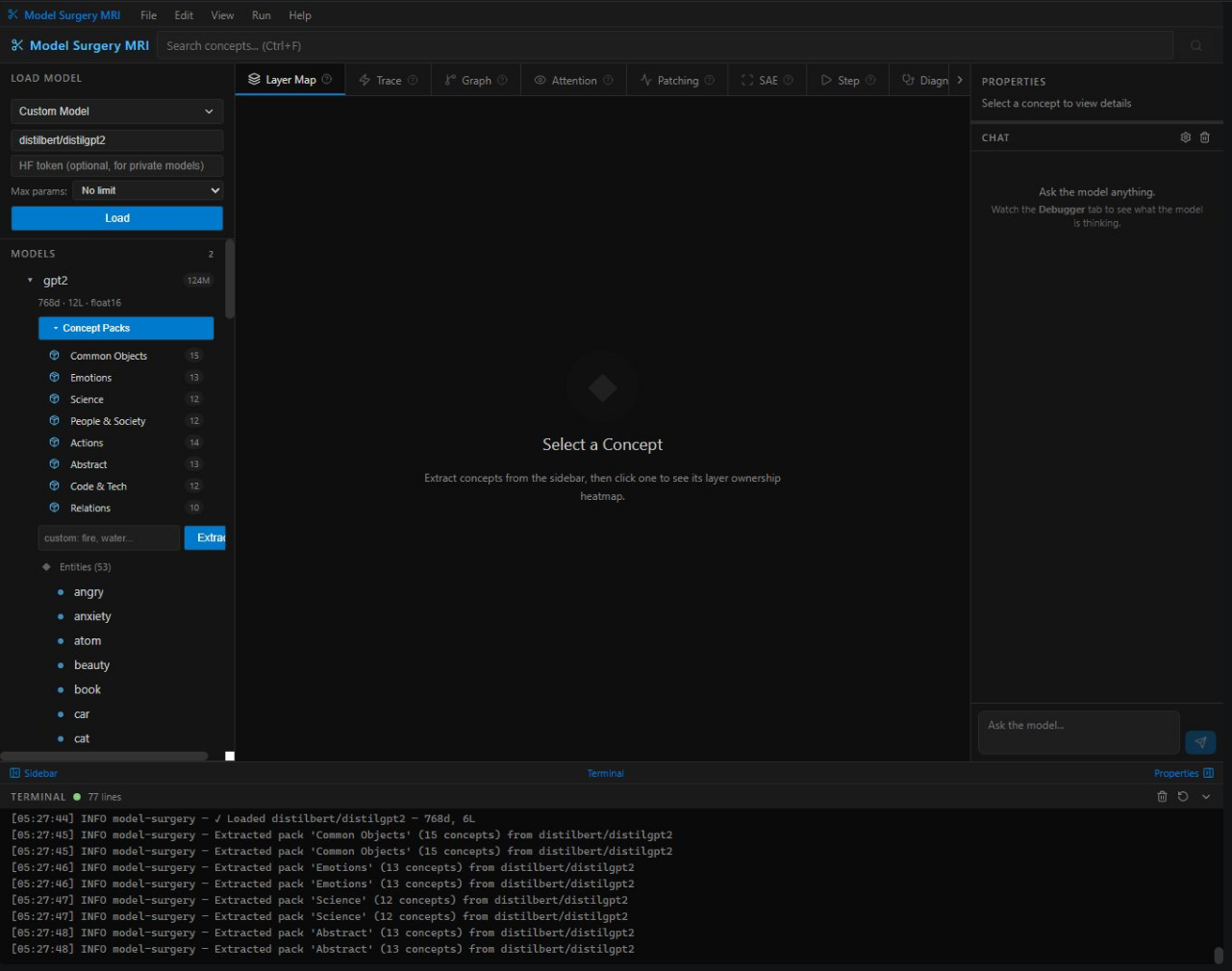

X-ray any concept across every weight matrix. Heatmap reveals exactly which layers encode which knowledge — answers where information lives inside a network.

Follow a concept flowing through every layer. Track meaning shifts, semantic transformations, and information propagation — like injecting dye into neural arteries.

Visual network of competing token predictions across all layers. Watch dozens of hypotheses compete — like seeing a chess player consider every possible move simultaneously.

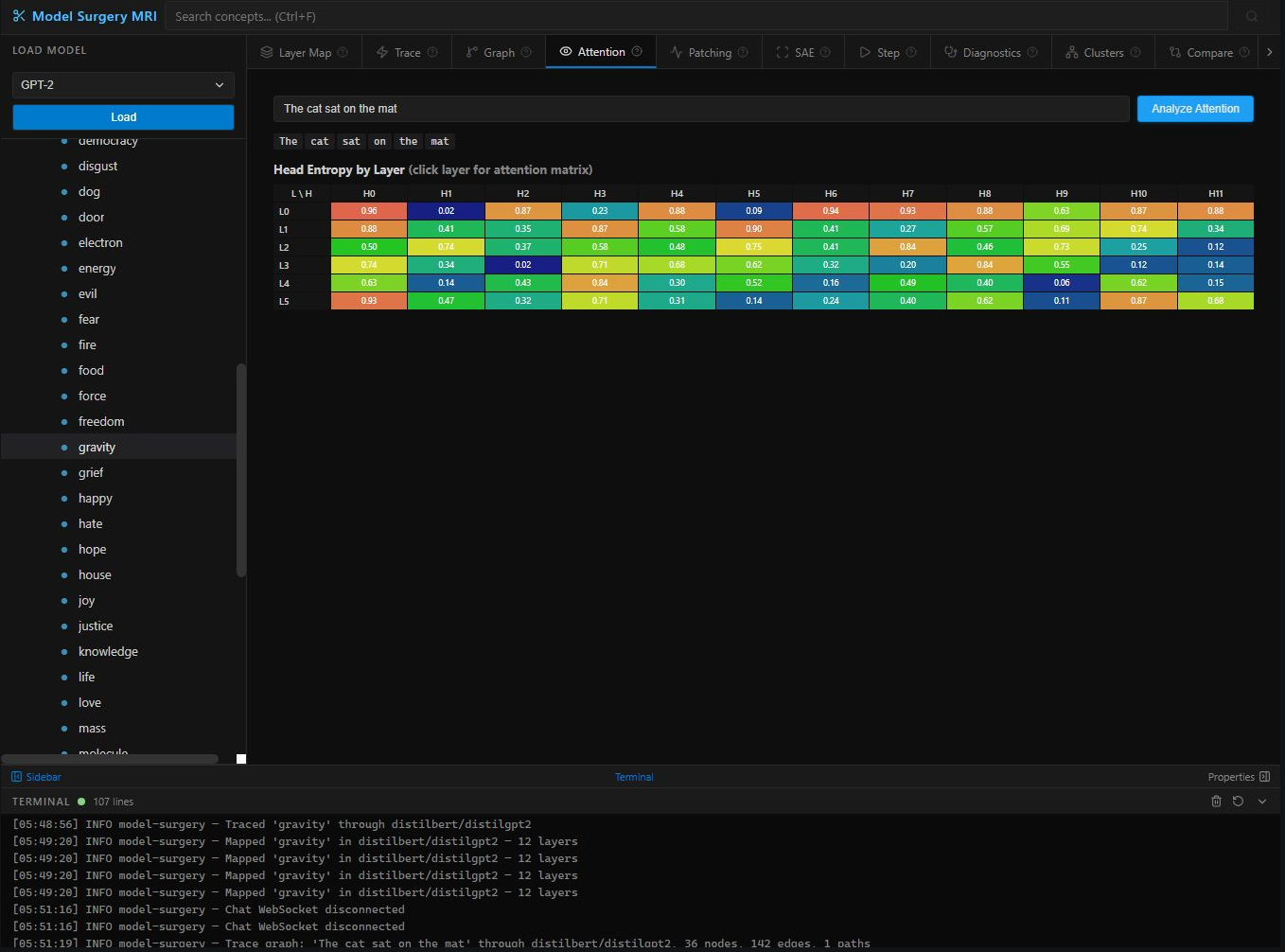

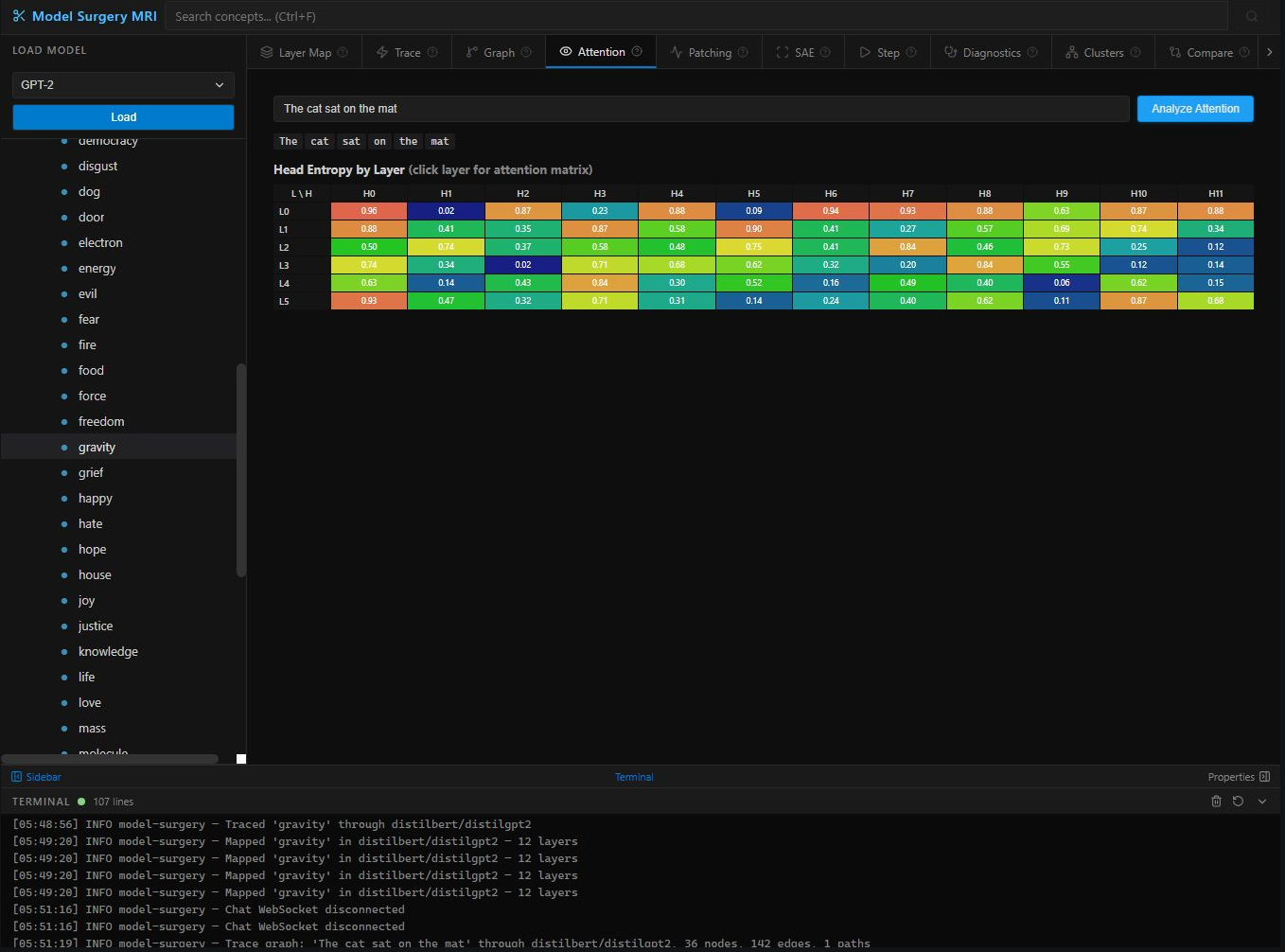

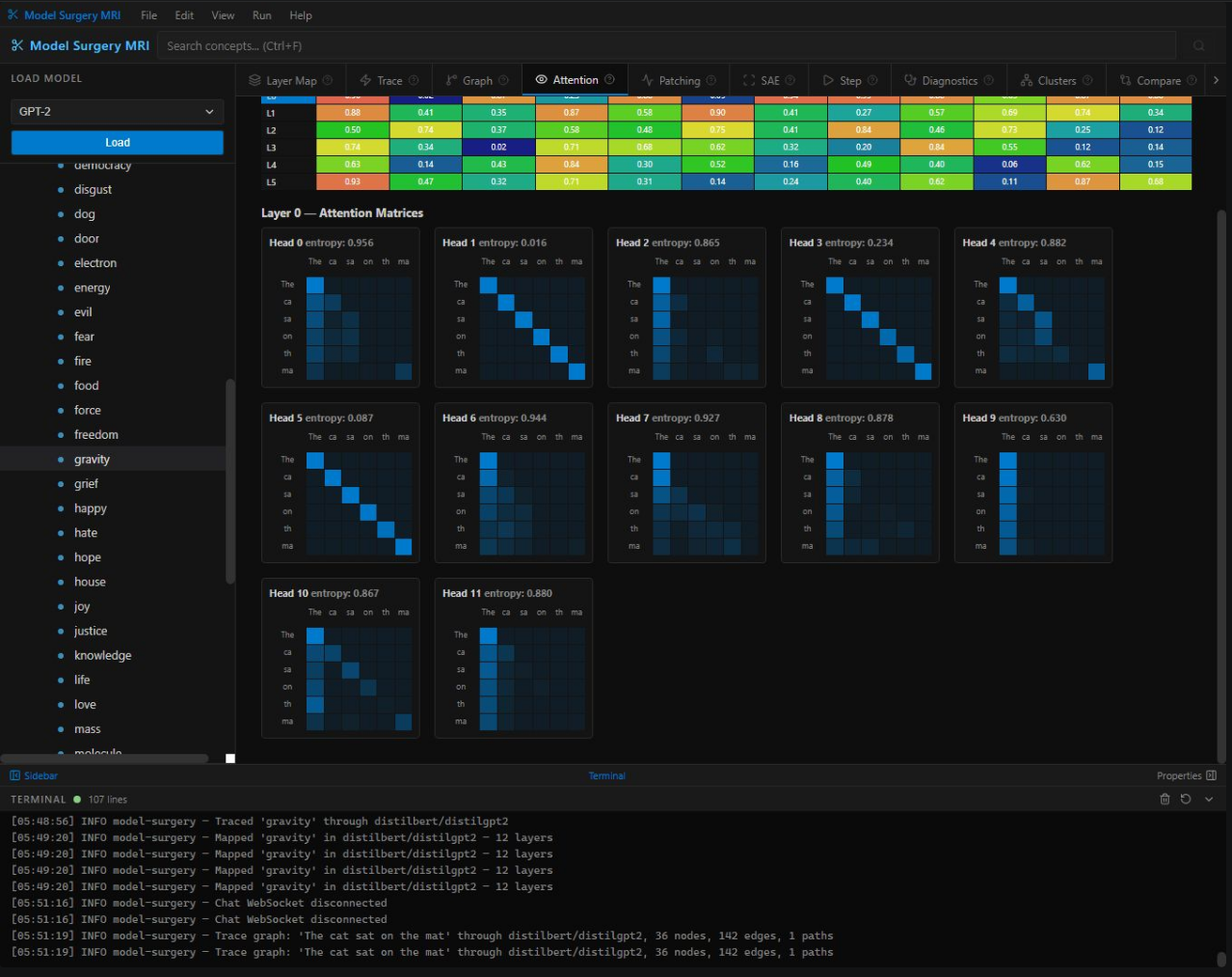

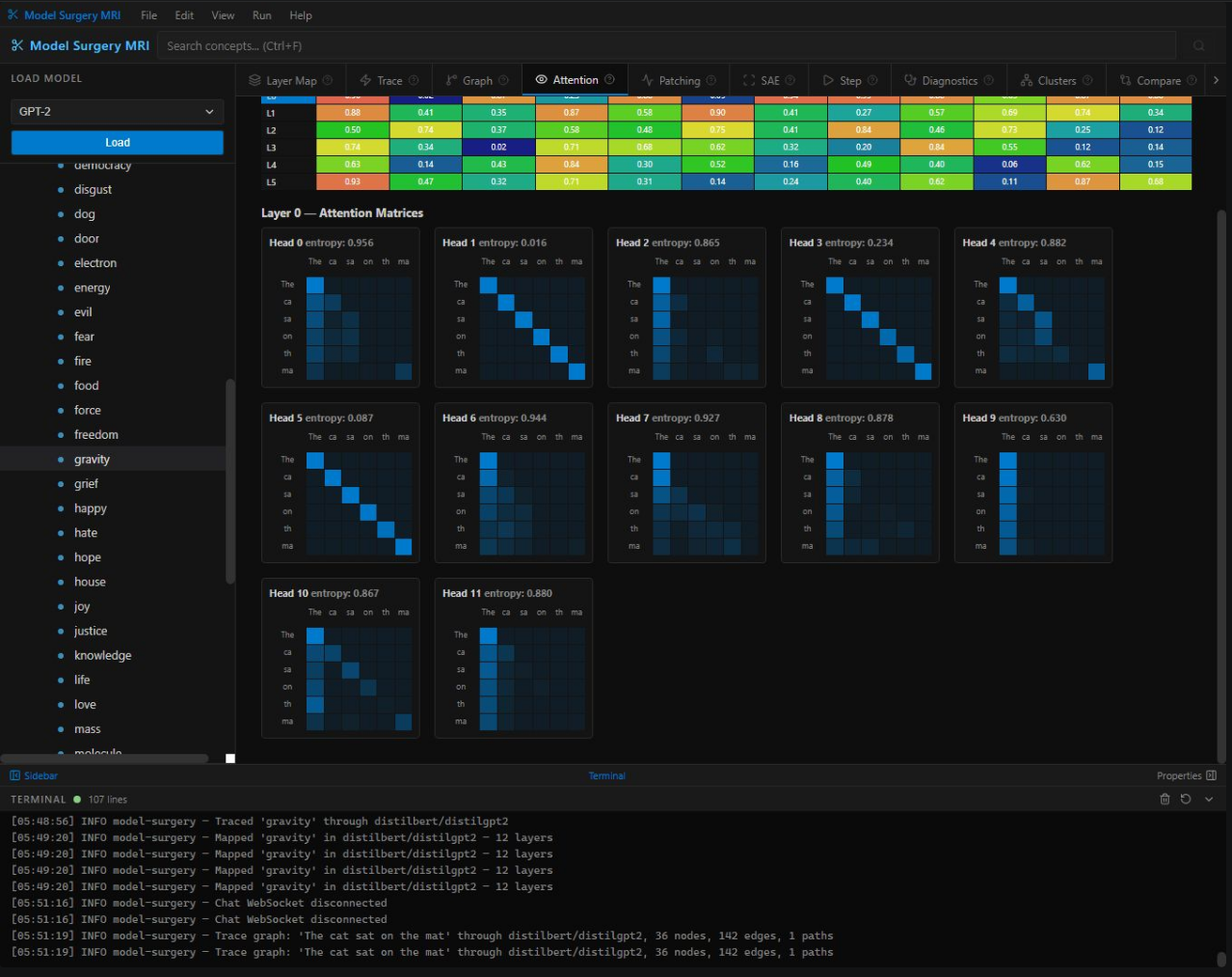

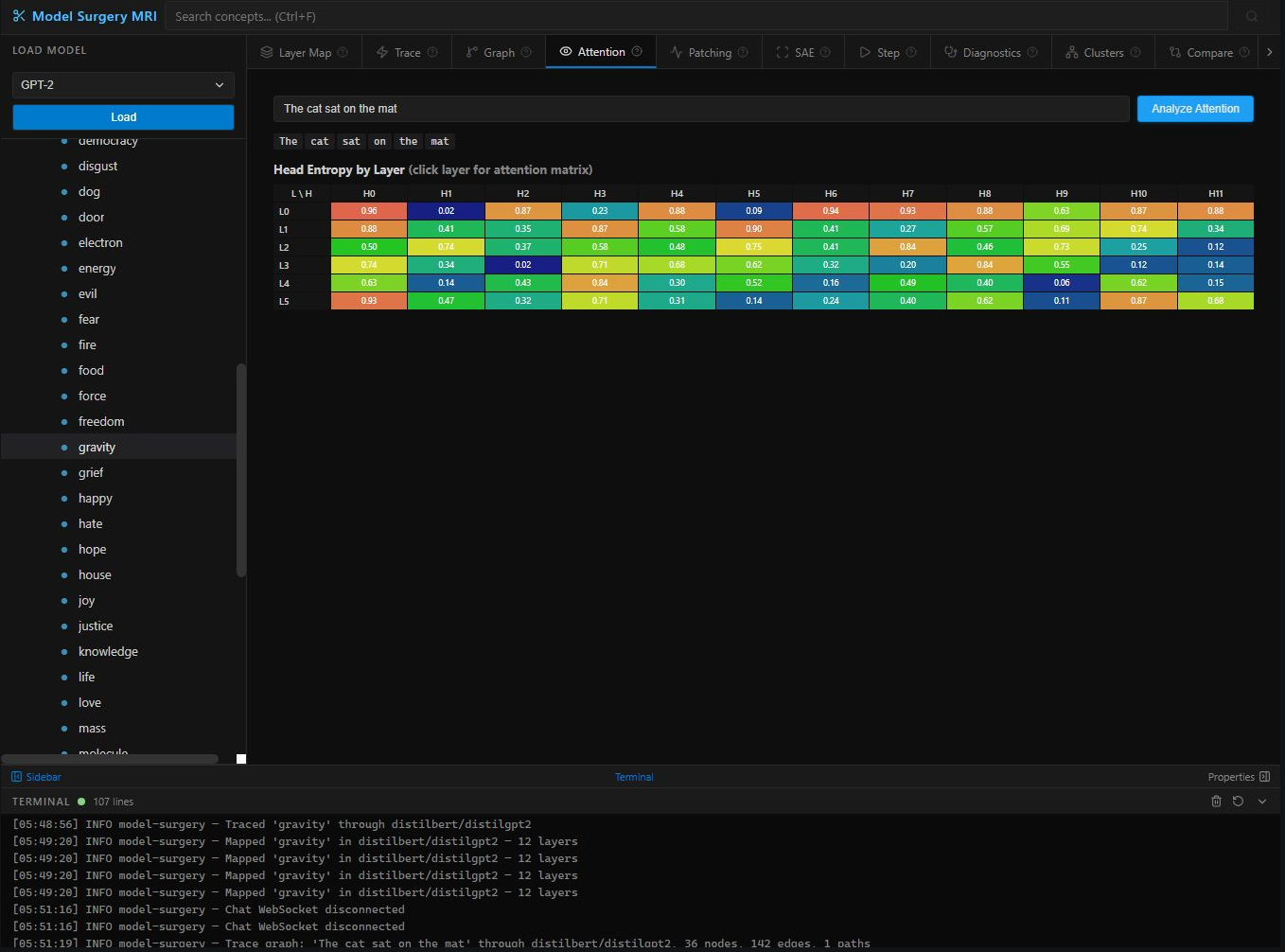

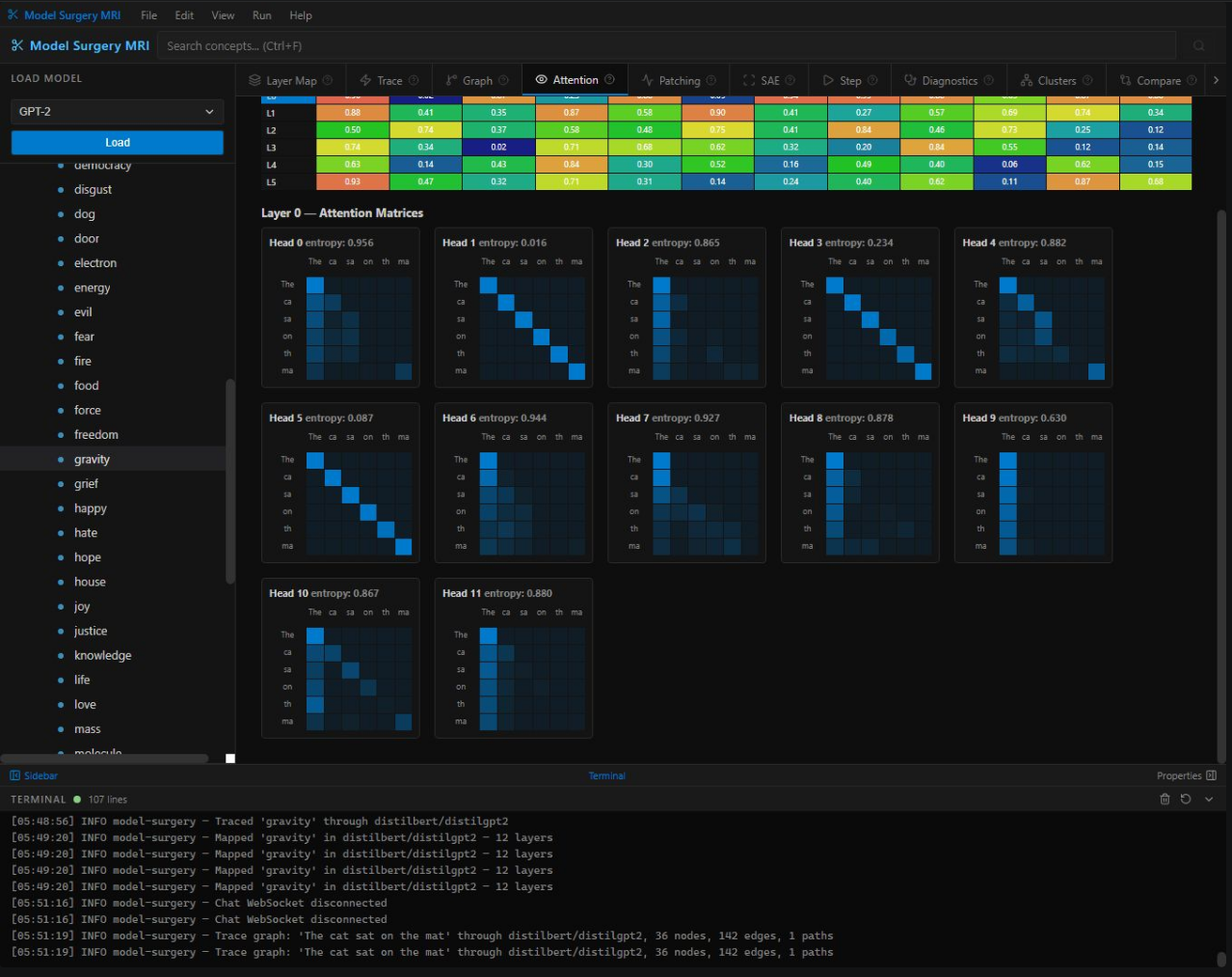

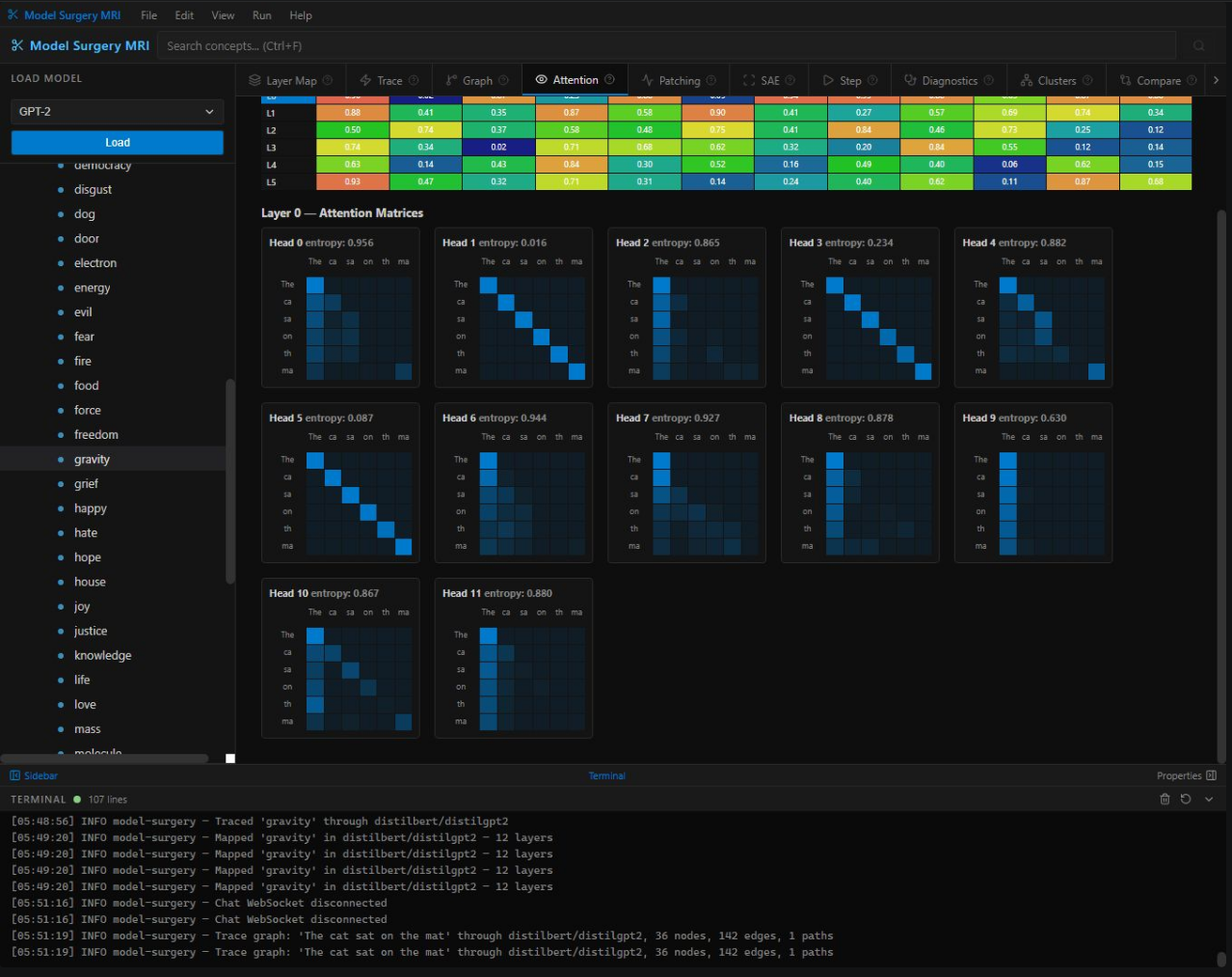

Entropy heatmaps + per-head attention matrices. See exactly which tokens attend to which — identify syntax heads, position heads, and redundant heads for pruning.

The gold standard from mechanistic interpretability. Corrupt specific layers and measure output divergence to find exactly where factual knowledge is stored.
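The corrupt-and-measure idea can be shown on a toy network. This is a sketch under stated assumptions: the three-layer tanh network, the additive Gaussian noise, and the L2 distance used as the divergence measure are all choices made for illustration, not details from the product. Published causal-tracing work typically corrupts the input and then restores clean states layer by layer; the version here follows the simpler description above.

```python
import numpy as np

def forward(x, weights, corrupt_layer=None, noise=None):
    """Run a toy MLP, optionally adding noise to one layer's output."""
    h = x
    for i, W in enumerate(weights):
        h = np.tanh(W @ h)
        if i == corrupt_layer:
            h = h + noise
    return h

rng = np.random.default_rng(2)

# Hypothetical 3-layer network with 4 hidden units per layer.
weights = [rng.normal(scale=0.5, size=(4, 4)) for _ in range(3)]
x = rng.normal(size=4)
noise = rng.normal(scale=0.5, size=4)

clean = forward(x, weights)

# Corrupt each layer in turn and measure how far the output moves.
divergence = [
    float(np.linalg.norm(forward(x, weights, i, noise) - clean))
    for i in range(len(weights))
]
for i, dv in enumerate(divergence):
    print(f"corrupt layer {i}: output divergence = {dv:.3f}")
```

Layers whose corruption moves the output most are the candidates for where the relevant information is carried.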

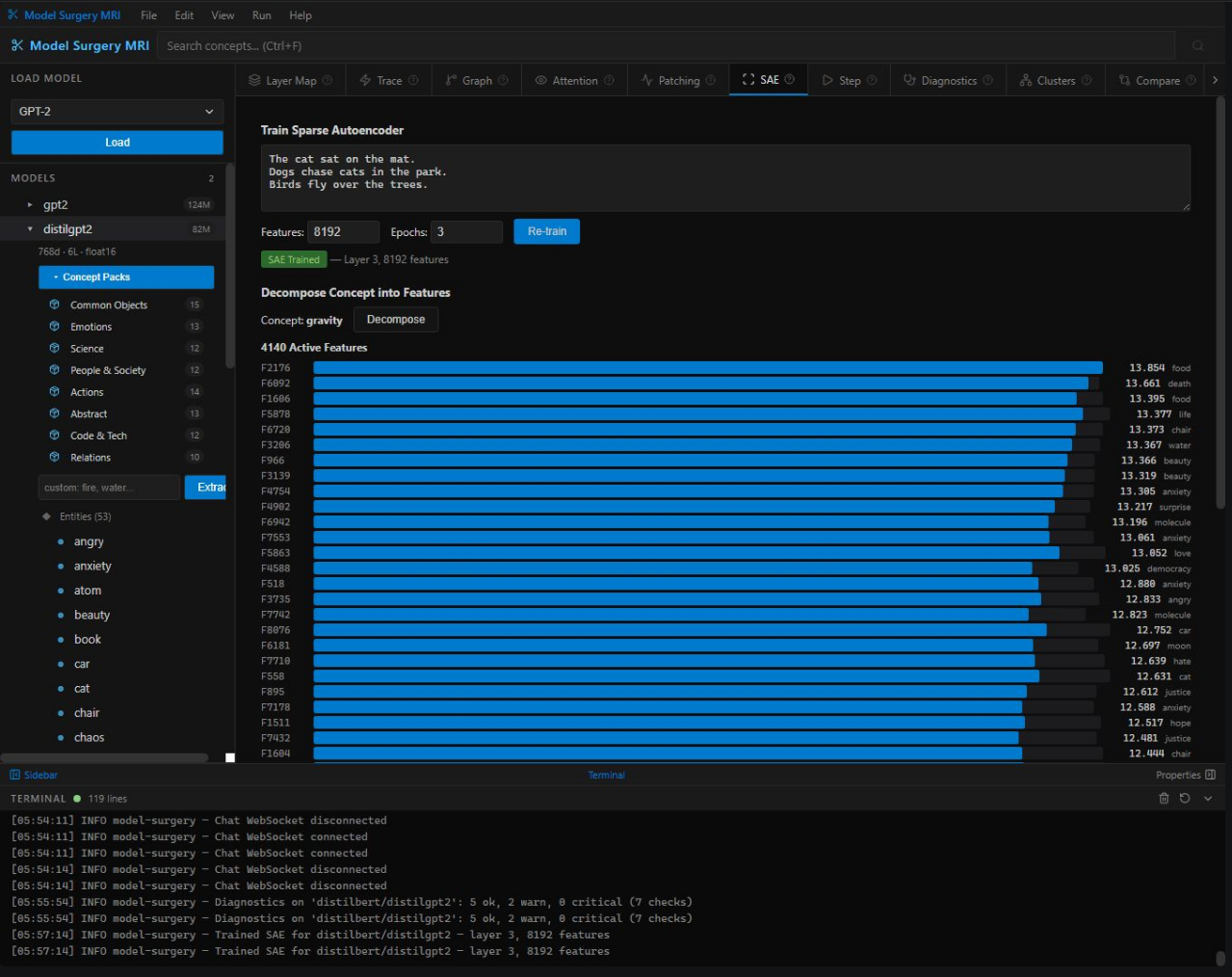

7-test automated health check: activation magnitude, token entropy, attention specialization, gradient flow, dead neurons, temporal regularity, contamination.

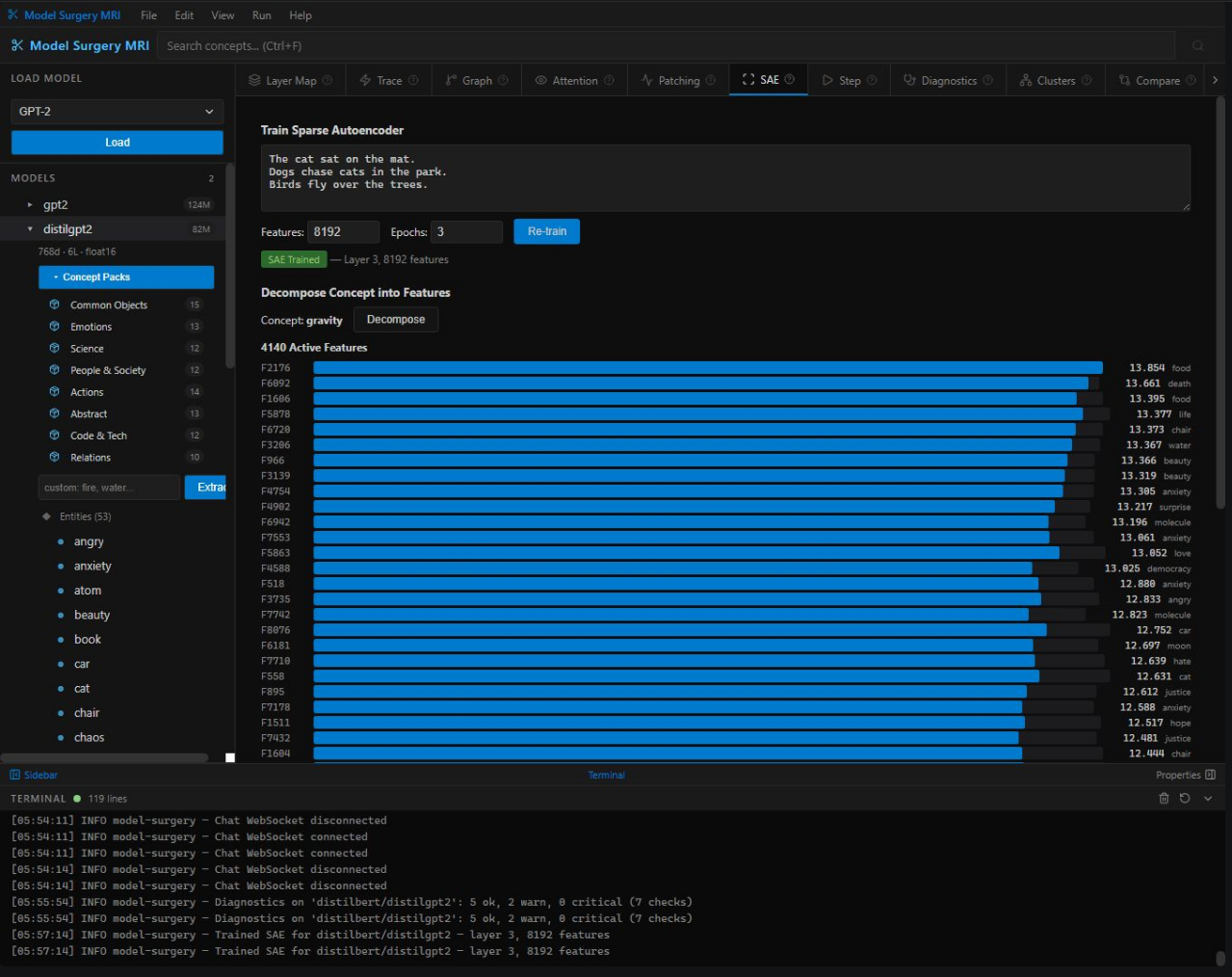

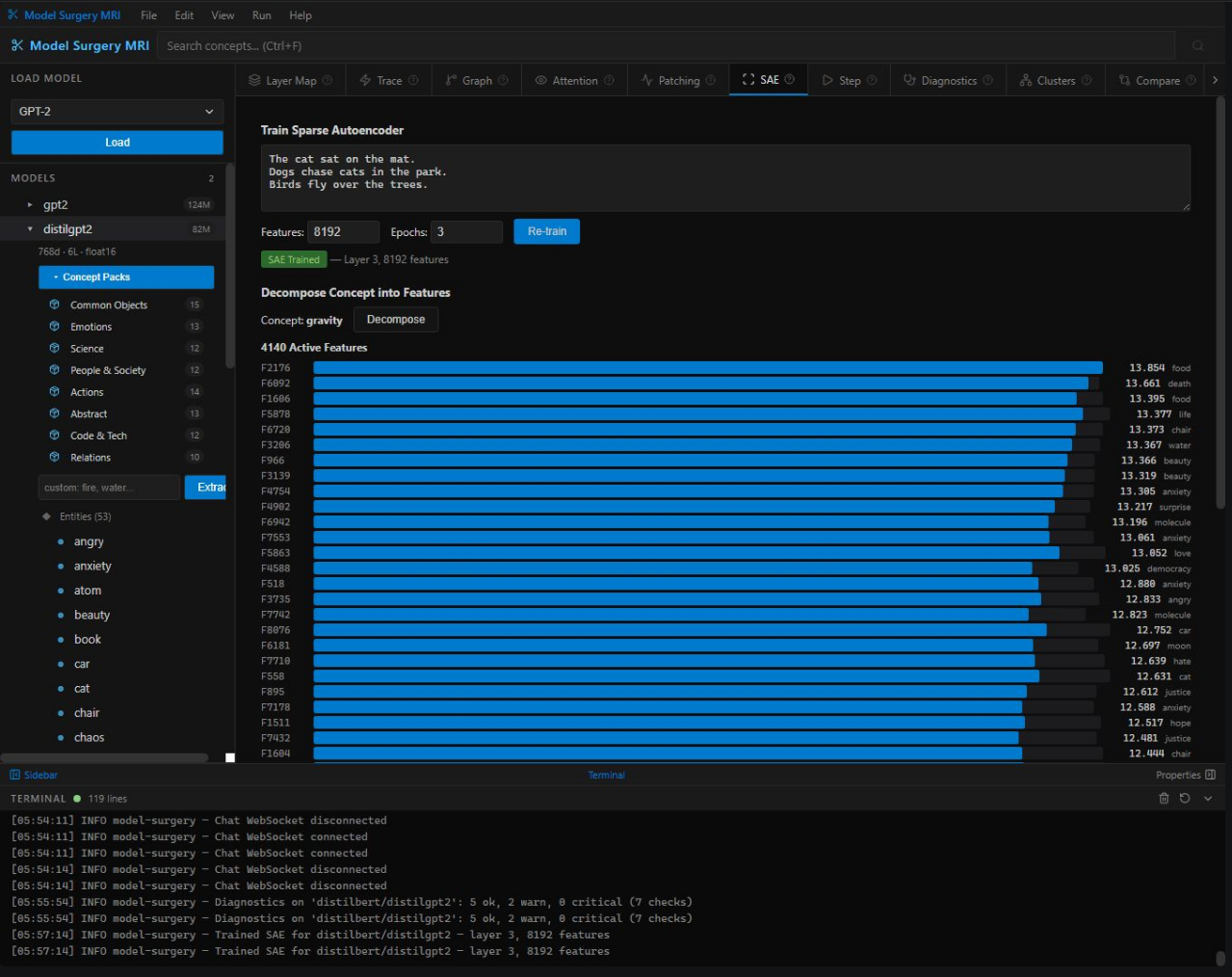

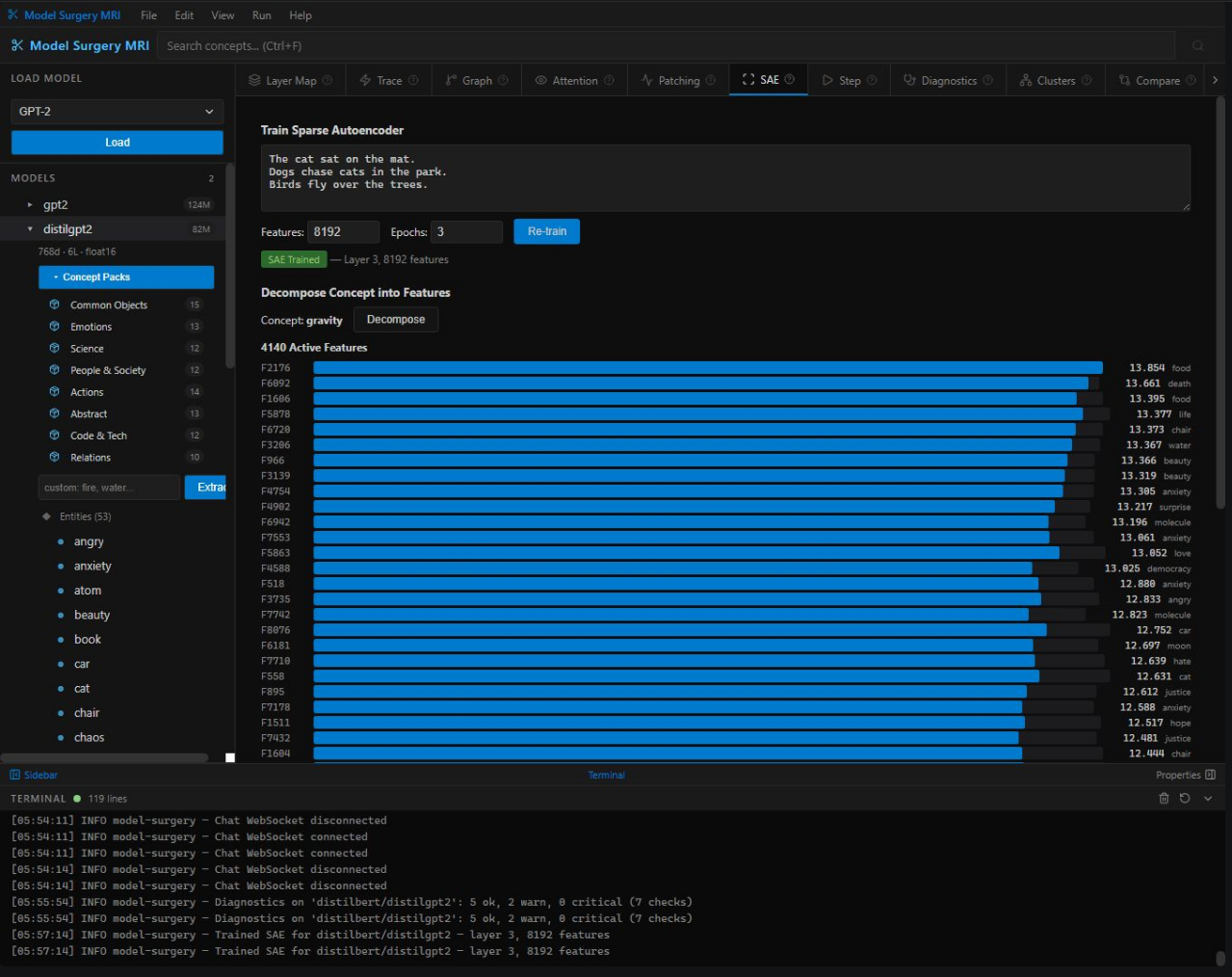

Train sparse autoencoders to decompose hidden activations into interpretable features. The same technique Anthropic uses to understand Claude — in a point-and-click interface.

Reveals how the model organizes knowledge internally. Concepts grouped by representational similarity — the model's internal ontology made visible.

Side-by-side diagnostics + concept overlap between two models. Essential for distillation teams: see exactly what knowledge was lost during compression.

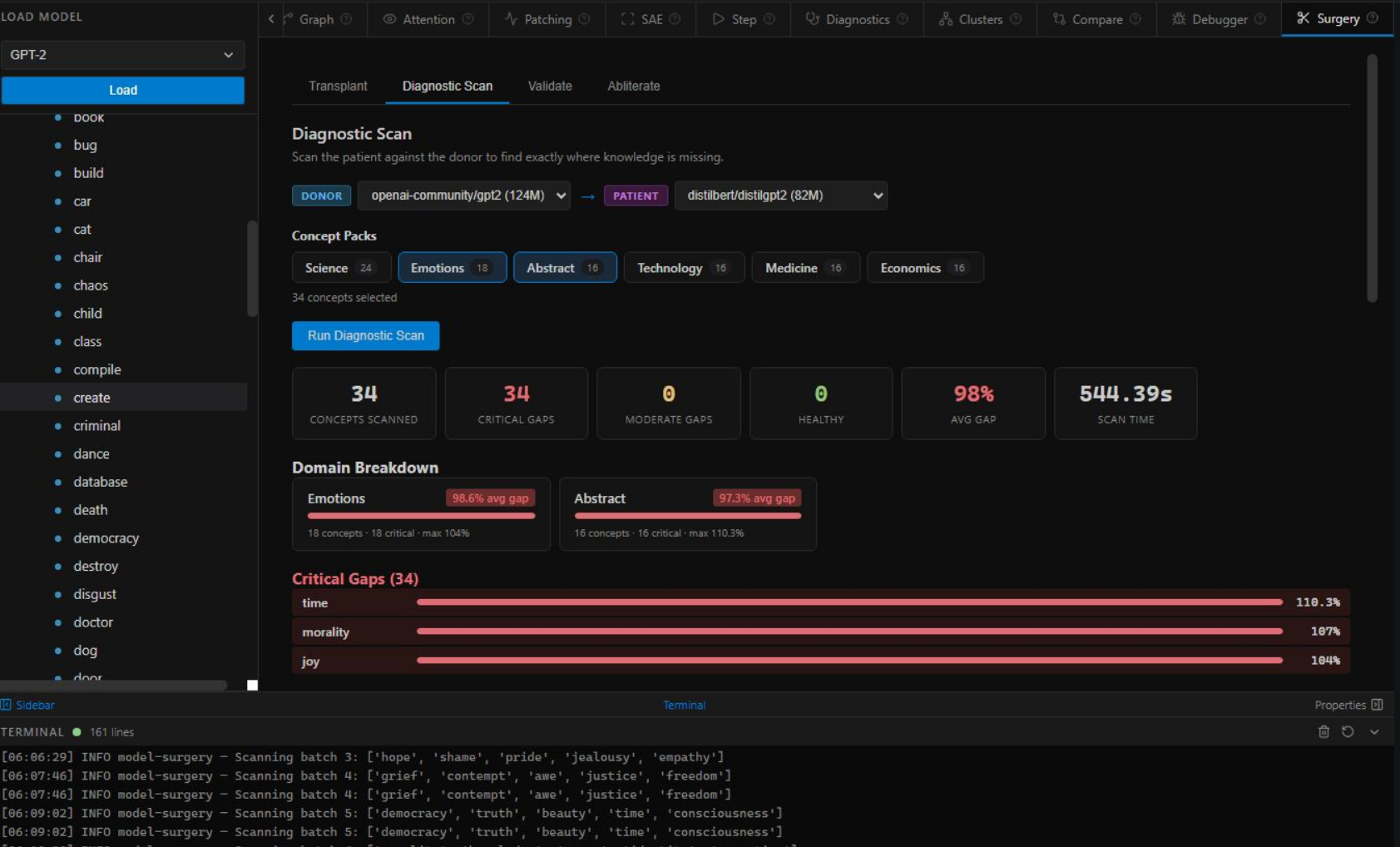

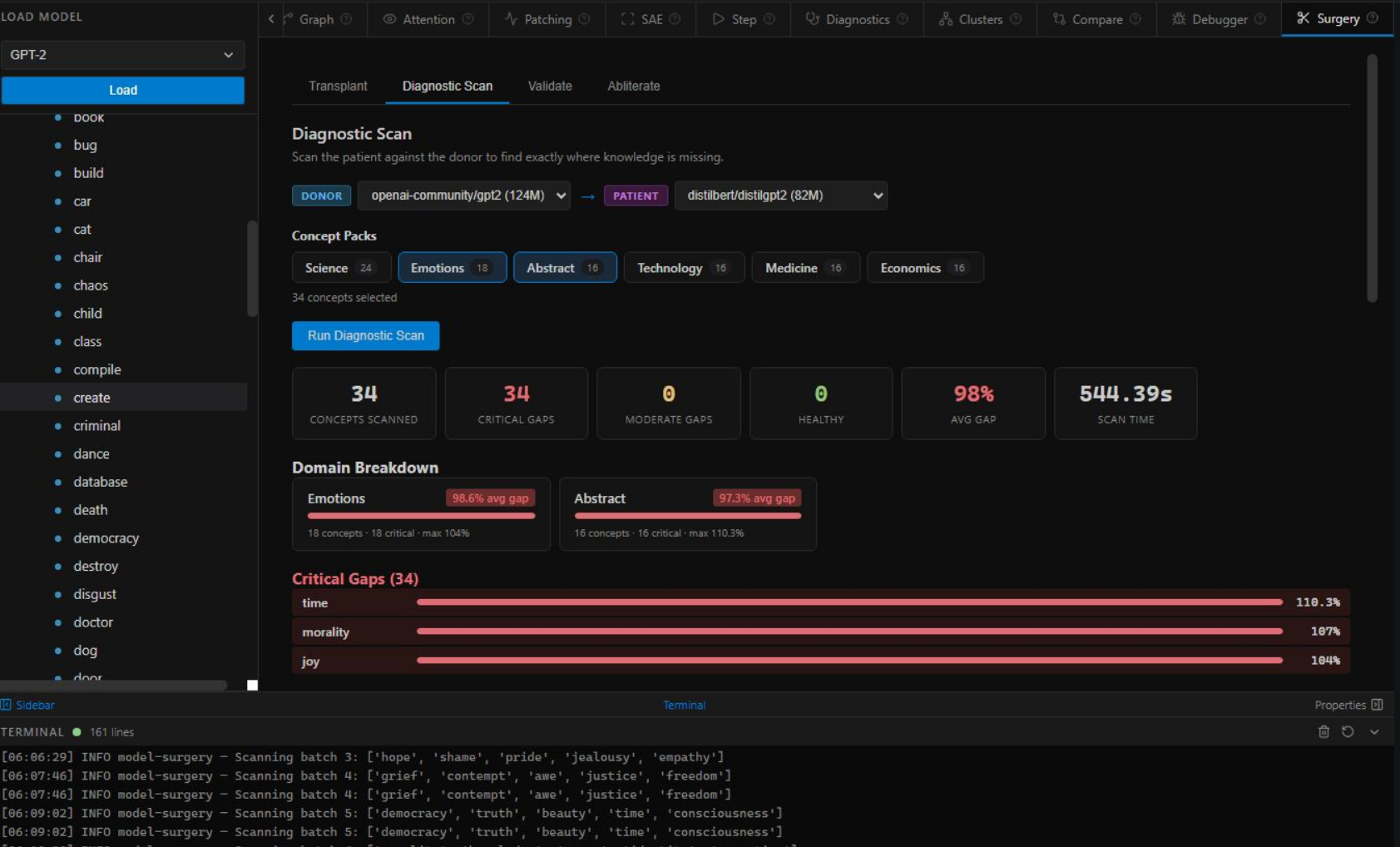

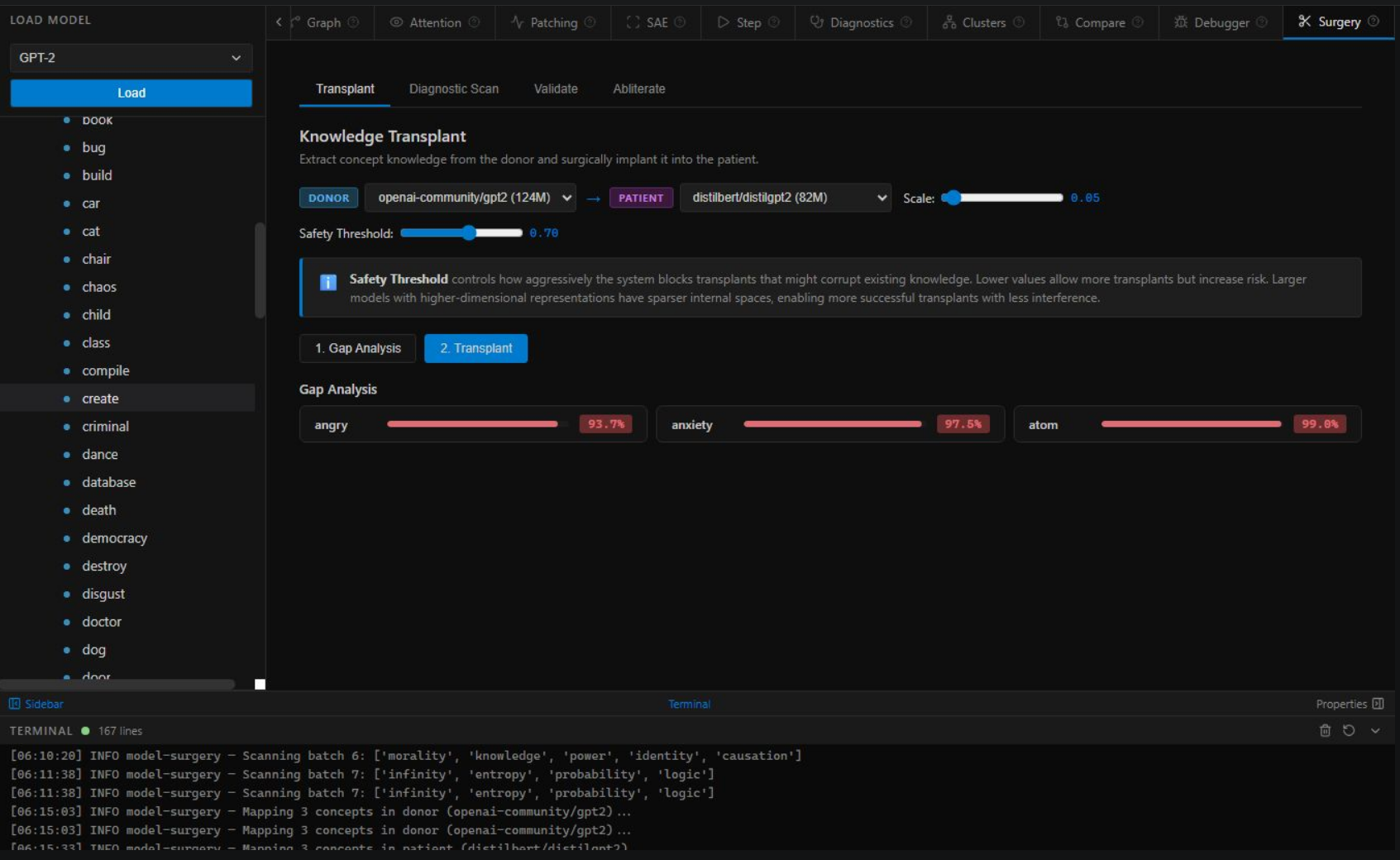

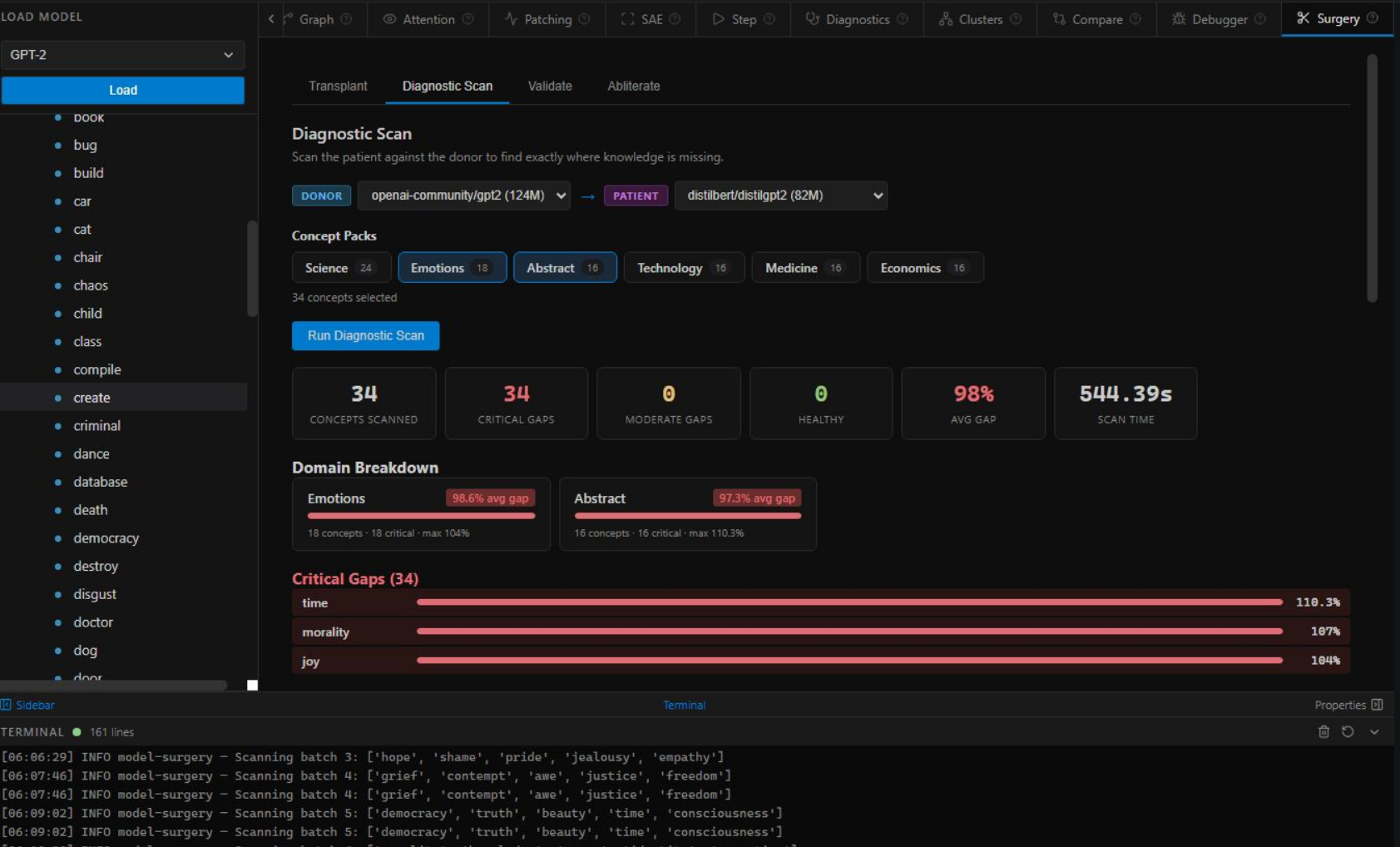

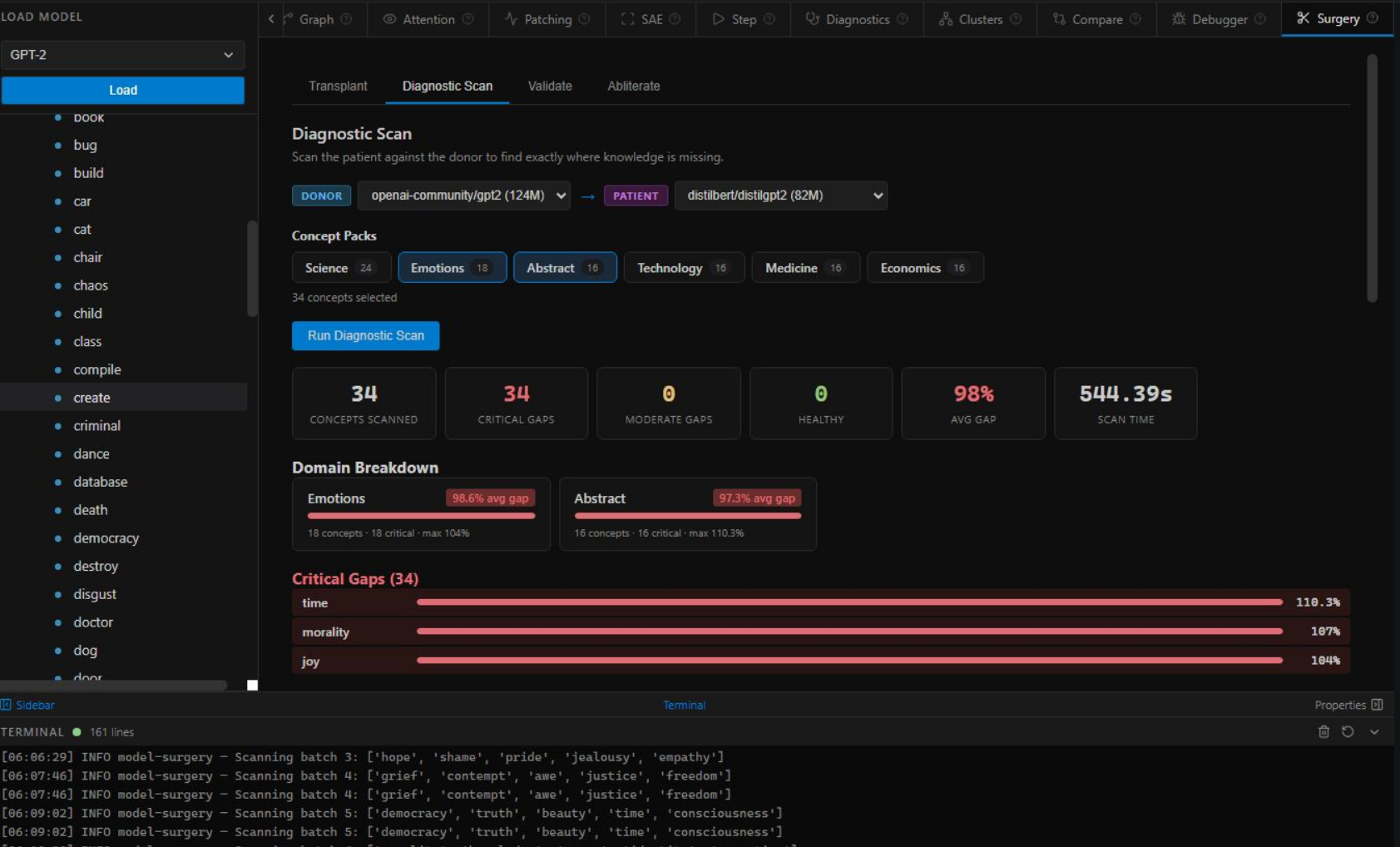

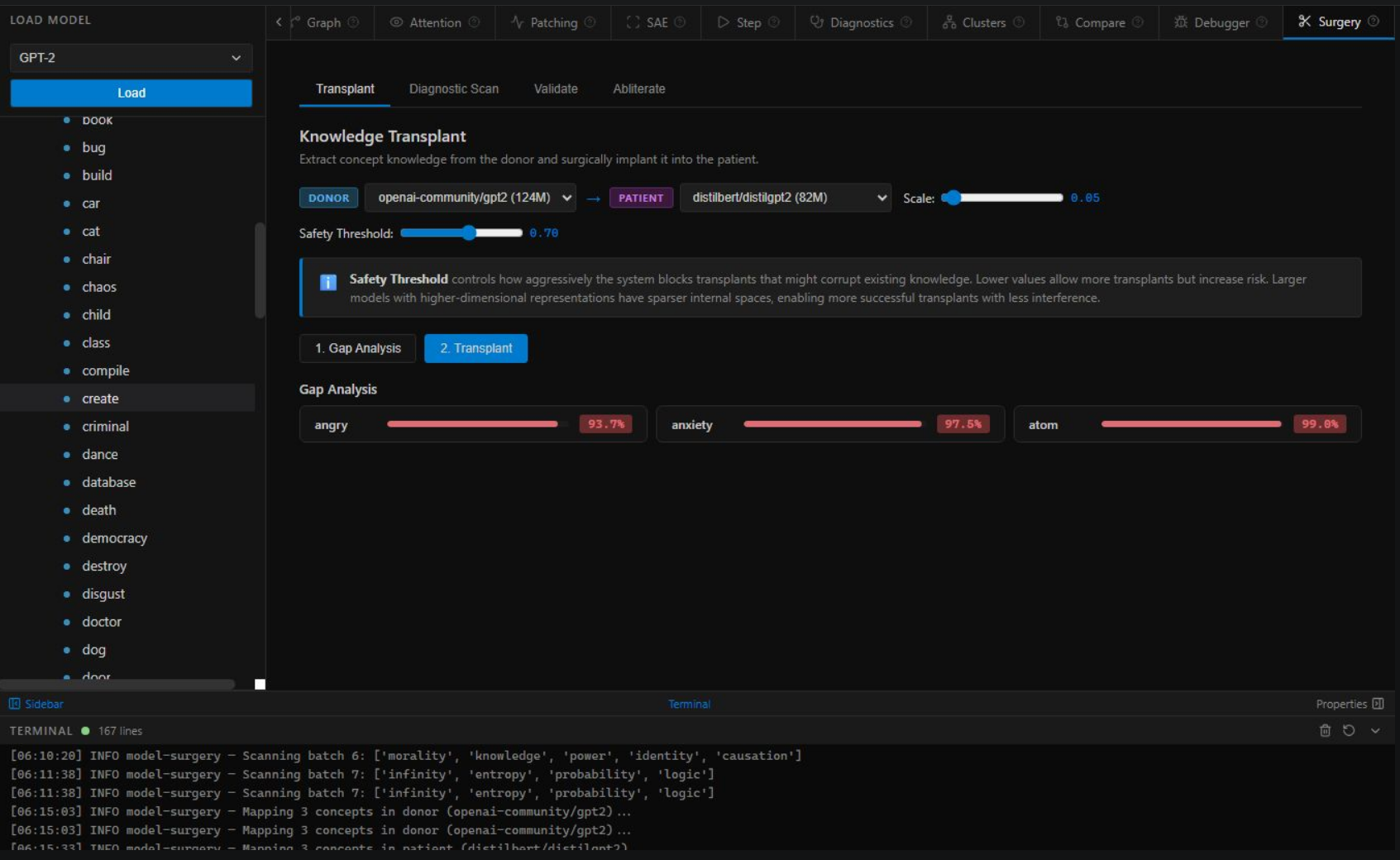

Full-body MRI. Scan entire concept packs and measure knowledge gaps between donor and patient models. Prioritize surgery targets with quantitative gap percentages.

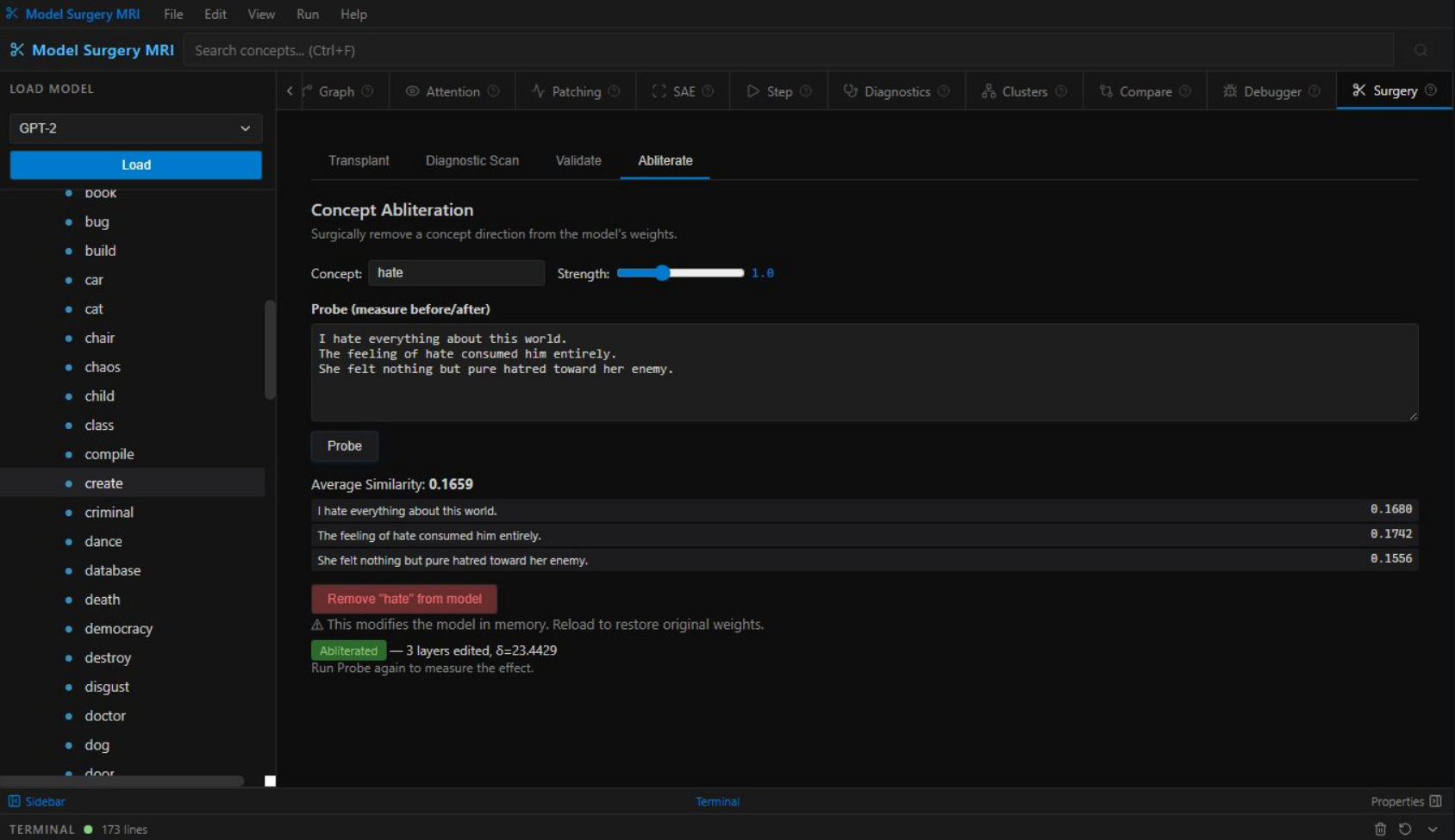

Extract concept representations from a donor model and inject them into the patient. Safety system detects interference and blocks dangerous transfers automatically.

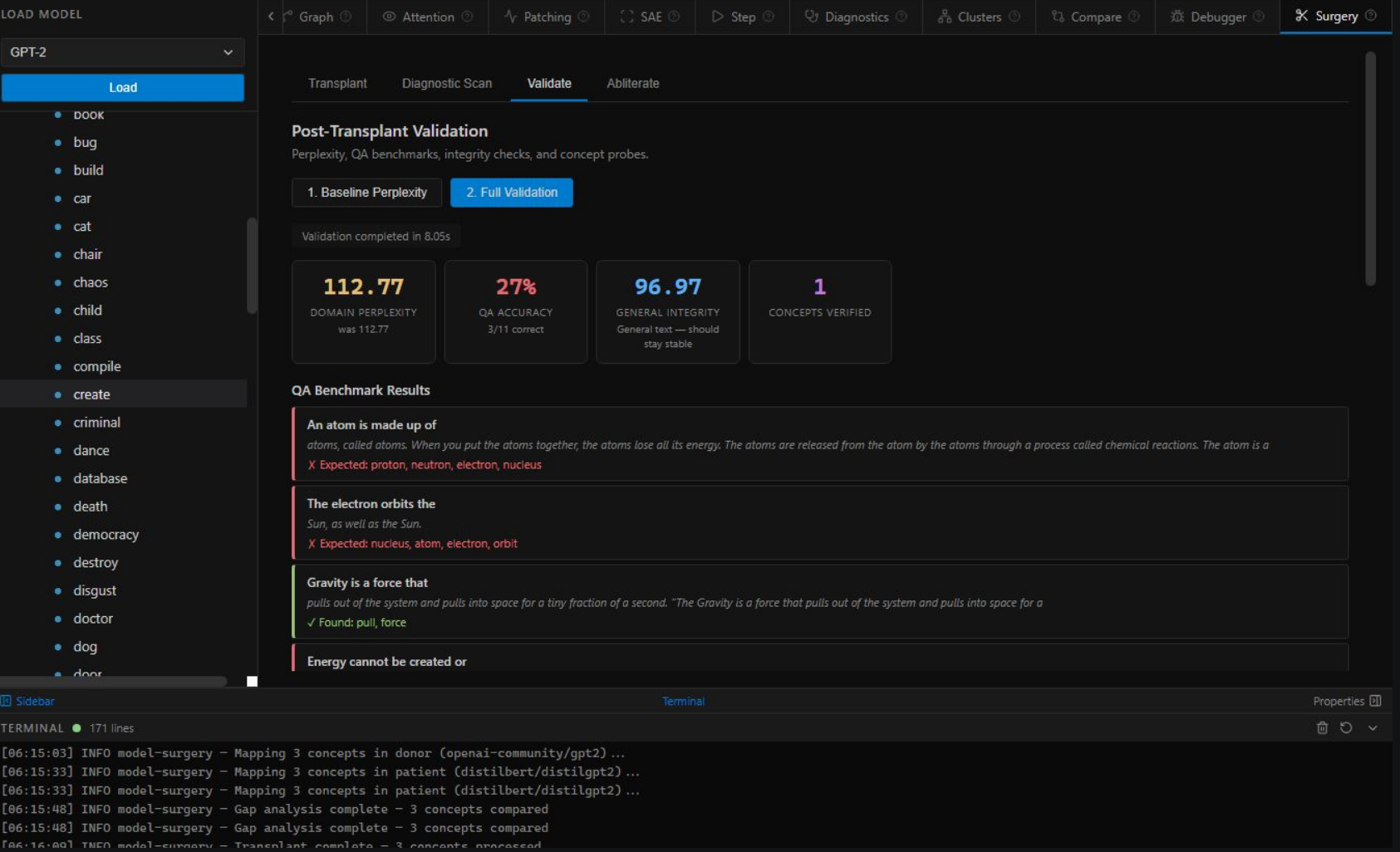

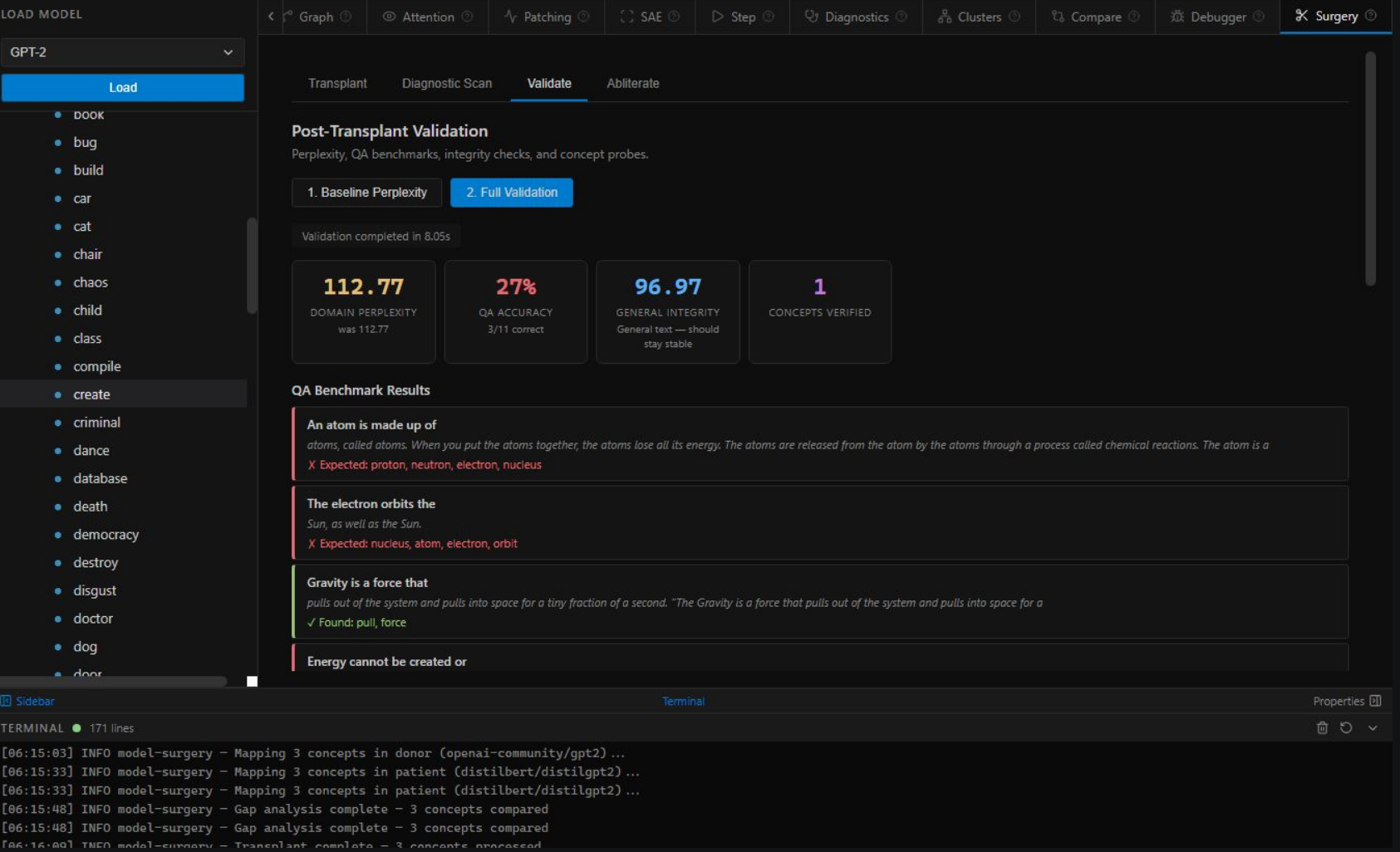

Post-operative verification: domain perplexity (coherence), QA accuracy (knowledge works), general integrity (no damage). Every surgery gets verified.
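Domain perplexity, the first of these checks, is the exponential of the average negative log-likelihood the model assigns to held-out domain text. The sketch below uses made-up per-token probabilities for a "before" and "after" model; the numbers are hypothetical and only show the direction of the comparison.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probabilities a model assigned to each true token."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Hypothetical probabilities assigned to the correct tokens of a domain sentence.
before = [0.05, 0.10, 0.02, 0.08]   # model before the edit
after = [0.40, 0.55, 0.30, 0.60]    # model after the edit

print(f"perplexity before: {perplexity(before):.2f}")
print(f"perplexity after:  {perplexity(after):.2f}")
```

Lower perplexity on domain text suggests the edit helped there; running the same measurement on unrelated general text is one way to check that nothing else was damaged.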

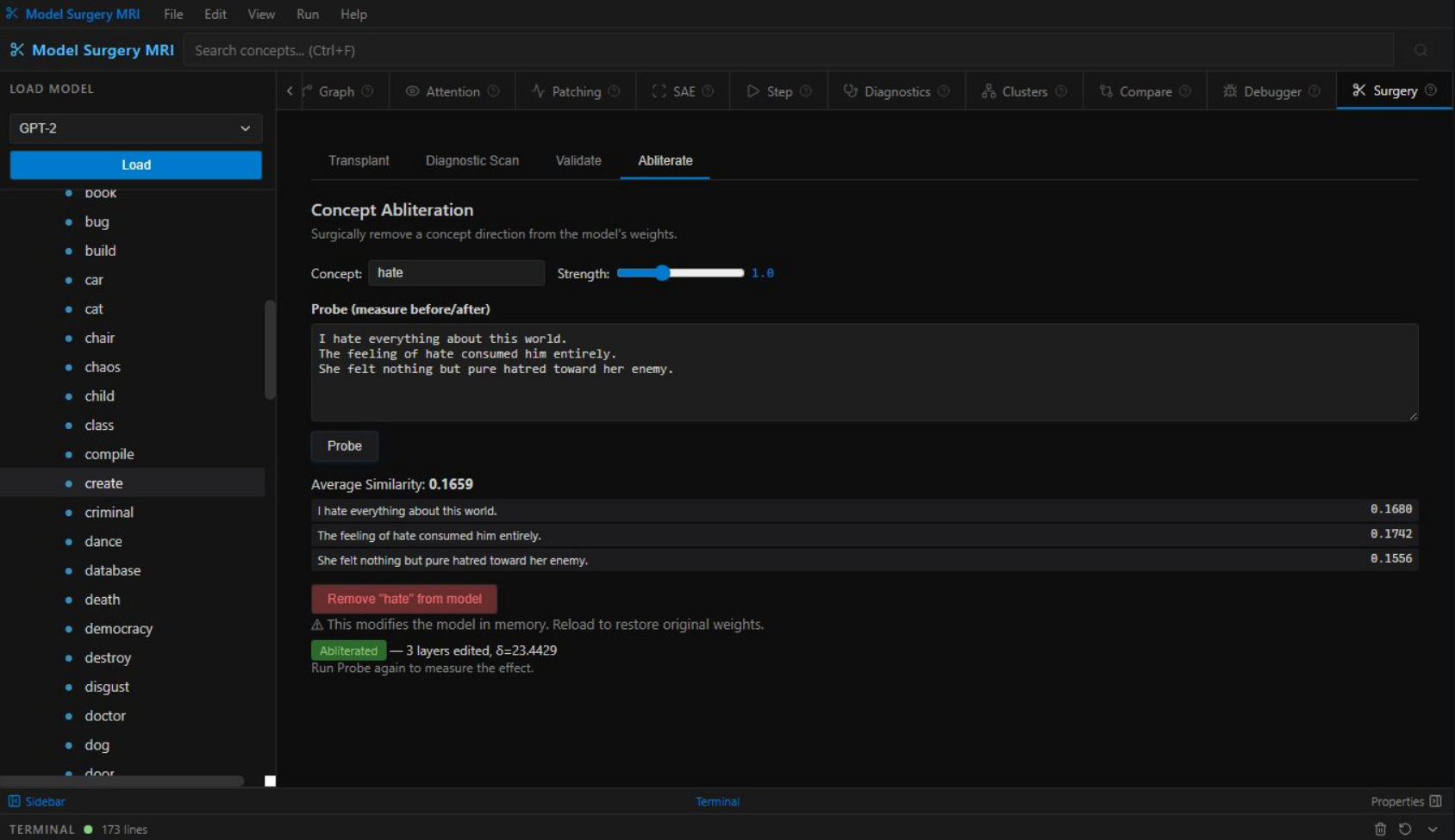

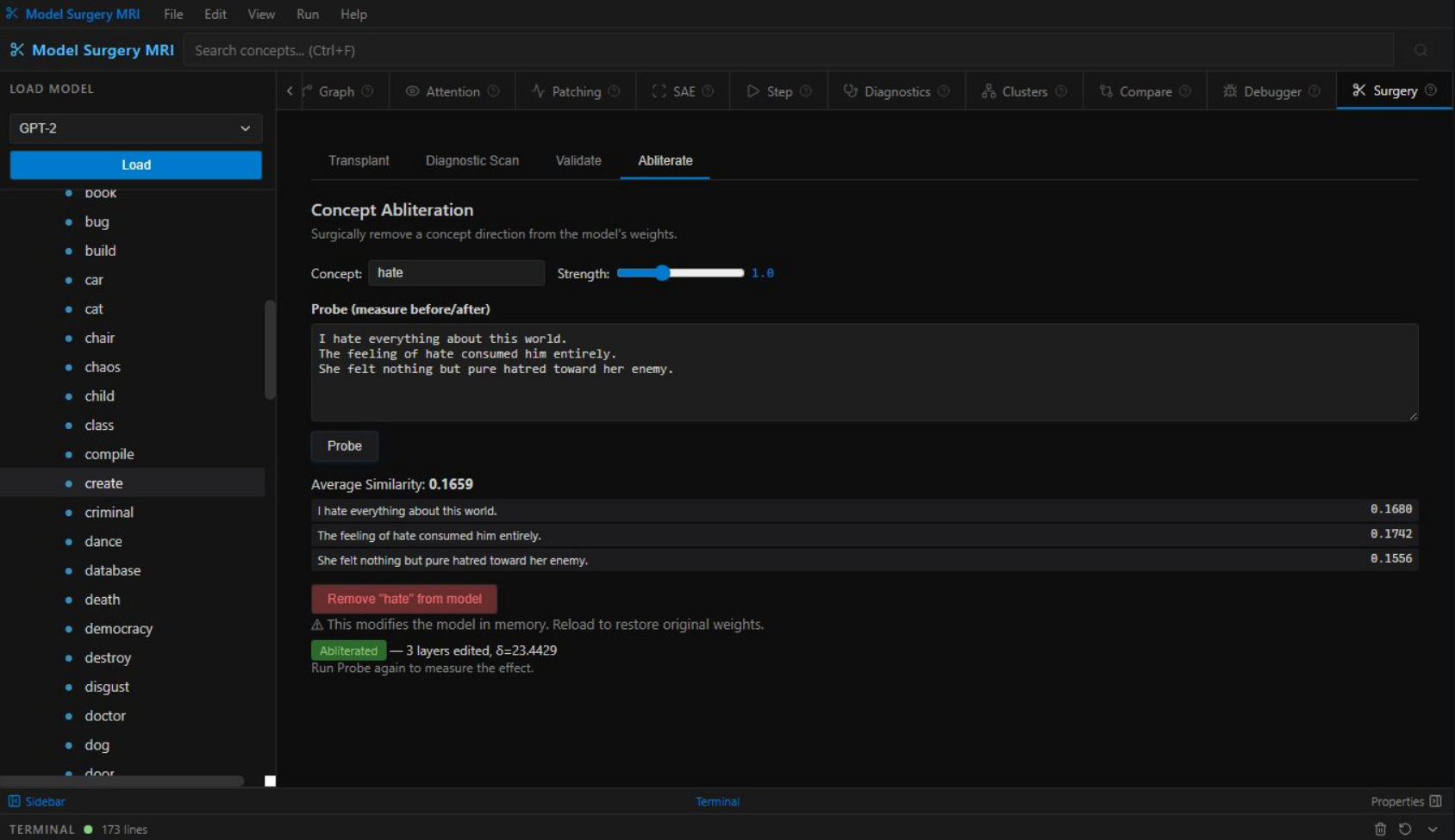

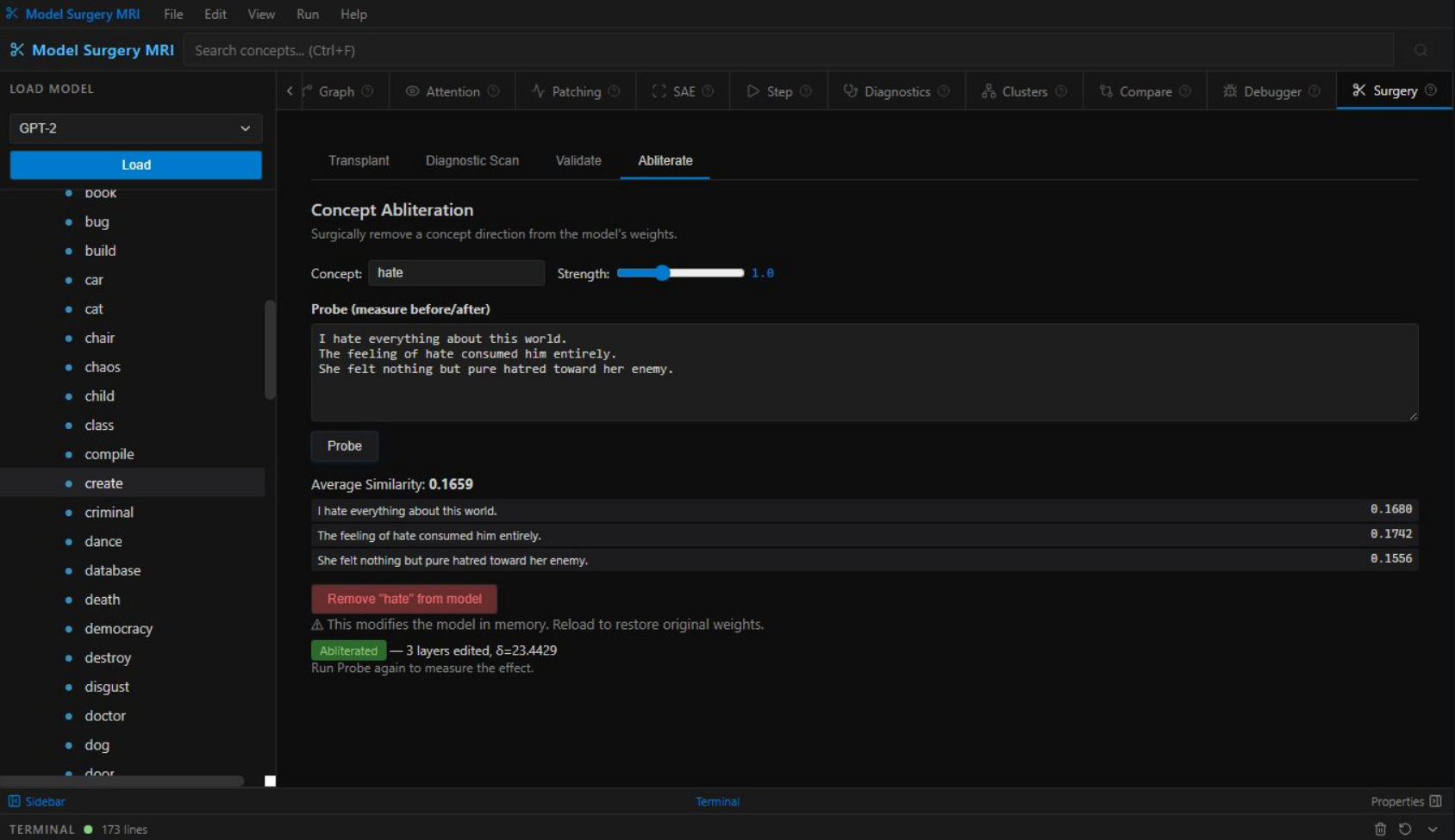

Surgical concept removal. Erase specific knowledge from model weights — not prompt filtering, actual weight-level deletion. For AI safety teams who need precision.

Abliterate harmful capabilities at the weight level, not the prompt level. Causal tracing shows exactly where dangerous knowledge lives. Precision removal, not blunt RLHF.

Logit lens, activation patching, sparse autoencoders, attention analysis — every interpretability technique from the literature in one unified interface. No custom scripts.

Compare shows exactly what knowledge was lost during compression. Diagnostic Scan quantifies gaps. Transplant restores what was lost — without retraining.

7-test diagnostics catches dead neurons, exploding activations, and contamination before deployment. Validation confirms model health after any modification.

Before/after concept comparisons, quantitative auditing, and the ability to surgically correct mistakes instead of restarting expensive training runs.

The Step view and Chat Debugger make "how transformers work" tangible and visual. Watch predictions form layer by layer. The best teaching tool for neural networks.

Running 70B+ models? Alignment improves with scale — 99%+ verified at 70B. Full MRI visibility into every layer of your production model. See exactly what it knows, where it stores it, and what changed after any edit.

Model Surgery builds on, extends, and in some areas supersedes the best existing work in neural editing and mechanistic interpretability. We are transparent about our intellectual lineage.

Meng et al., 2022. Proved facts can be located and surgically edited in transformer weights. We generalize this to arbitrary capabilities.

Hu et al., 2021. The adapter architecture we repurpose for concept fingerprinting: used to extract geometry, not to fine-tune.

Meng et al., 2022. Extended ROME to simultaneous multi-edits. We extend this concept to full capability transplantation.

Conneau et al., 2018. Showed that embedding spaces align across languages, directly validating our cross-model Procrustes approach.

"Training a frontier model costs millions. With Model Surgery, transplanting any capability costs $0, and our 99%+ alignment at 70B proves it works better on frontier models than small ones. For the first time, AI capability is not a function of how much money you spent training it."

Extract French fluency from a 70B multilingual model and transplant it into a 7B English model. No bilingual data. No fine-tuning. The geometry transfers — verified.

Companies spending millions on domain-specific model training can instead surgically transplant domain knowledge. A single procedure replaces months of training expense.

For the first time: observe exactly where knowledge lives in neural networks, compare locations across architectures, verify transfers mechanistically. A microscope for AI.

From "we need this capability" to production deployment in minutes, not months.

Estimated annual industry savings once teams replace retraining with Model Surgery

Model Surgery is in private research beta. We are onboarding a select group of teams who want to reshape the economics of their AI development.

Patent pending. By requesting access you agree to our research terms. · research@model-surgery.com